Neurosymbolic Reinforcement Learning and Planning: A Survey

Paper and Code

Sep 02, 2023

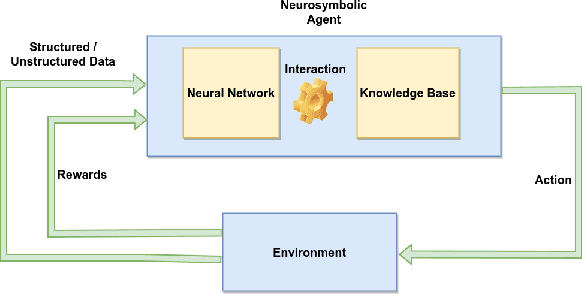

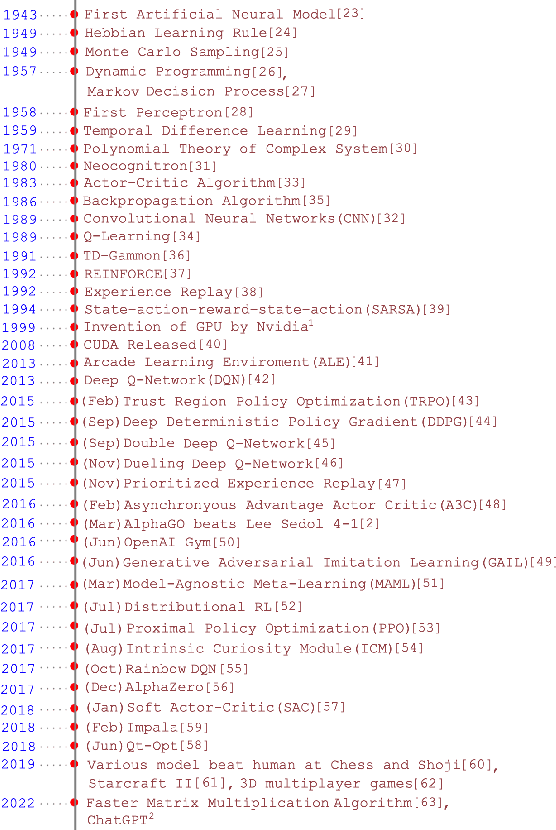

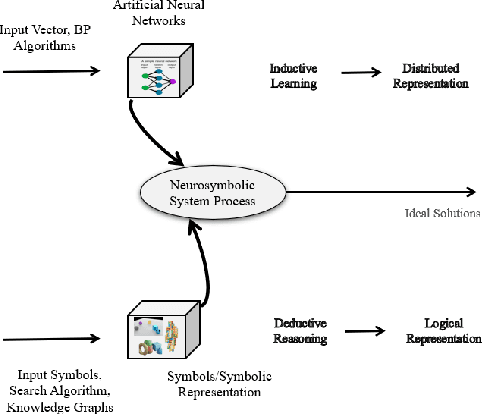

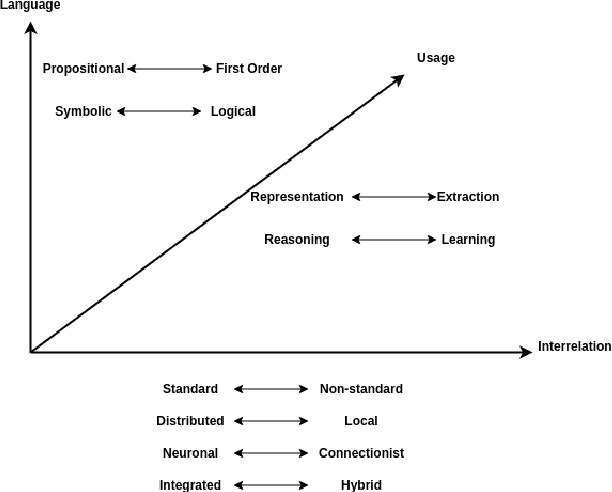

The area of Neurosymbolic Artificial Intelligence (Neurosymbolic AI) is rapidly developing and has become a popular research topic, encompassing sub-fields such as Neurosymbolic Deep Learning (Neurosymbolic DL) and Neurosymbolic Reinforcement Learning (Neurosymbolic RL). Compared to traditional learning methods, Neurosymbolic AI offers significant advantages by simplifying complexity and providing transparency and explainability. Reinforcement Learning(RL), a long-standing Artificial Intelligence(AI) concept that mimics human behavior using rewards and punishment, is a fundamental component of Neurosymbolic RL, a recent integration of the two fields that has yielded promising results. The aim of this paper is to contribute to the emerging field of Neurosymbolic RL by conducting a literature survey. Our evaluation focuses on the three components that constitute Neurosymbolic RL: neural, symbolic, and RL. We categorize works based on the role played by the neural and symbolic parts in RL, into three taxonomies:Learning for Reasoning, Reasoning for Learning and Learning-Reasoning. These categories are further divided into sub-categories based on their applications. Furthermore, we analyze the RL components of each research work, including the state space, action space, policy module, and RL algorithm. Additionally, we identify research opportunities and challenges in various applications within this dynamic field.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge