MuSLCAT: Multi-Scale Multi-Level Convolutional Attention Transformer for Discriminative Music Modeling on Raw Waveforms

Paper and Code

Apr 06, 2021

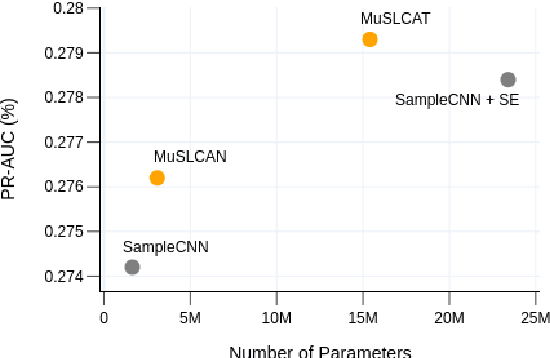

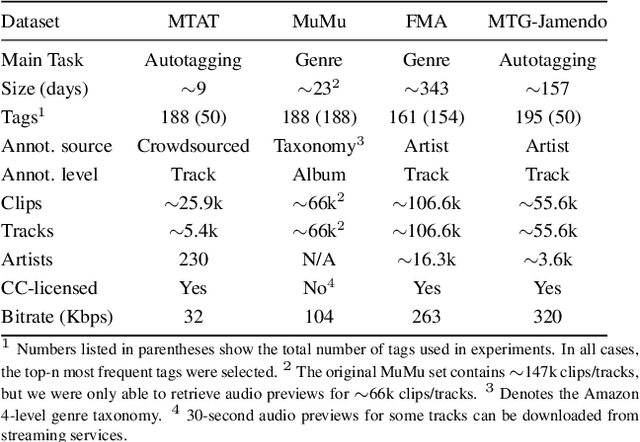

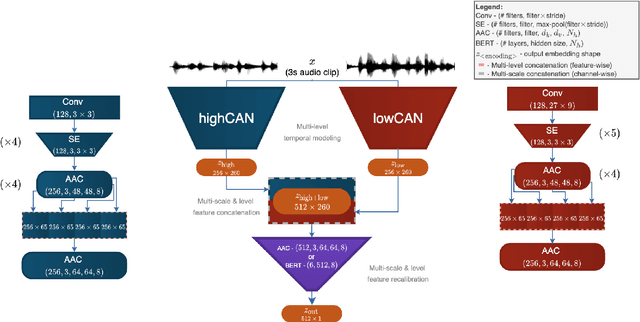

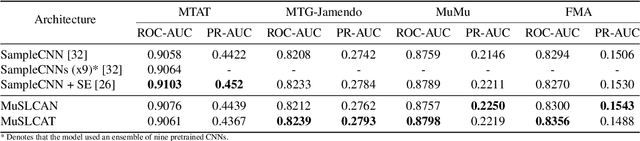

In this work, we aim to improve the expressive capacity of waveform-based discriminative music networks by modeling both sequential (temporal) and hierarchical information in an efficient end-to-end architecture. We present MuSLCAT, or Multi-scale and Multi-level Convolutional Attention Transformer, a novel architecture for learning robust representations of complex music tags directly from raw waveform recordings. We also introduce a lightweight variant of MuSLCAT called MuSLCAN, short for Multi-scale and Multi-level Convolutional Attention Network. Both MuSLCAT and MuSLCAN model features from multiple scales and levels by integrating a frontend-backend architecture. The frontend targets different frequency ranges while modeling long-range dependencies and multi-level interactions by using two convolutional attention networks with attention-augmented convolution (AAC) blocks. The backend dynamically recalibrates multi-scale and level features extracted from the frontend by incorporating self-attention. The difference between MuSLCAT and MuSLCAN is their backend components. MuSLCAT's backend is a modified version of BERT. While MuSLCAN's is a simple AAC block. We validate the proposed MuSLCAT and MuSLCAN architectures by comparing them to state-of-the-art networks on four benchmark datasets for music tagging and genre recognition. Our experiments show that MuSLCAT and MuSLCAN consistently yield competitive results when compared to state-of-the-art waveform-based models yet require considerably fewer parameters.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge