Multimodal Shared Autonomy for Social Navigation Assistance of Telepresence Robots

Oct 17, 2022

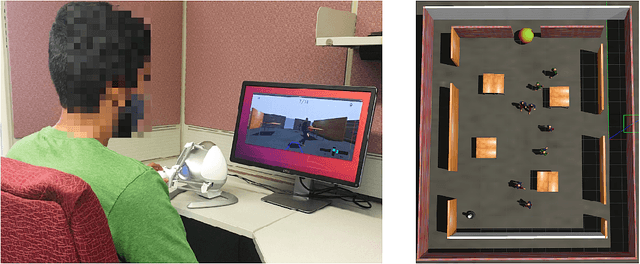

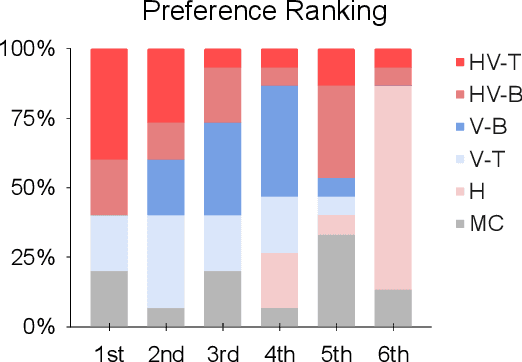

Mobile telepresence robots (MTRs) have become increasingly popular in the expanding world of remote work, providing new avenues for people to actively participate in activities at a distance. However, humans operating MTRs often have difficulty navigating in densely populated environments due to limited situation awareness and a narrow field of view, which reduces user acceptance and satisfaction. Shared autonomy in navigation has been studied primarily in static environments or in situations where only one pedestrian interacts with the robot. We present a multimodal shared autonomy approach, leveraging visual and haptic guidance, to provide navigation assistance for remote operators in densely populated environments. The approach uses a modified form of reciprocal velocity obstacles to generate safe control inputs while taking social proxemics constraints into account. We propose two visual guidance designs, as well as haptic force rendering, to convey the safe control input to the operator. We conducted a user study to compare the merits and limitations of multimodal navigation assistance with those of haptic or visual assistance alone on a shared navigation task. The study involved 15 participants operating a virtual telepresence robot in a virtual hall with moving pedestrians, using the different assistance modalities. We evaluated navigation performance, transparency and cooperation, as well as user preferences. Our results showed that participants preferred multimodal assistance with a visual guidance trajectory over haptic or visual modalities alone, although it had no impact on navigation performance. Additionally, we found that visual guidance trajectories conveyed a higher degree of understanding and cooperation than equivalent haptic cues in a navigation task.
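The abstract does not spell out how the modified reciprocal velocity obstacles are computed, so the following is only a rough sketch of the general idea, not the authors' method. It uses a plain sampled velocity-obstacle check (no reciprocity) and models the proxemics constraint by inflating each pedestrian's radius with a personal-space margin. All names (`collides`, `safe_velocity`) and all numeric defaults (radii, margin, time horizon, sampling resolution) are assumptions chosen for illustration.

```python
import math


def collides(rel_pos, rel_vel, radius, horizon):
    """Return True if the pedestrian comes within `radius` of the robot
    at any time in [0, horizon], assuming constant velocities.

    rel_pos: pedestrian position minus robot position (x, y)
    rel_vel: pedestrian velocity minus robot velocity (x, y)
    """
    px, py = rel_pos
    vx, vy = rel_vel
    speed_sq = vx * vx + vy * vy
    if speed_sq < 1e-12:
        # No relative motion: the distance never changes.
        return px * px + py * py < radius * radius
    # Time of closest approach, clamped to the look-ahead window.
    t = -(px * vx + py * vy) / speed_sq
    t = min(max(t, 0.0), horizon)
    cx, cy = px + vx * t, py + vy * t
    return cx * cx + cy * cy < radius * radius


def safe_velocity(robot_pos, command, pedestrians,
                  robot_radius=0.3, personal_space=0.45,
                  horizon=5.0, max_speed=1.0,
                  n_speeds=5, n_angles=36):
    """Pick the sampled velocity closest to the operator's command that
    avoids every pedestrian's inflated disc within the horizon.

    pedestrians: list of (position, velocity, radius) tuples.
    personal_space: assumed proxemics margin added to each pedestrian.
    """
    # Candidates: the command itself, a full stop, and a polar grid.
    candidates = [command, (0.0, 0.0)]
    for i in range(1, n_speeds + 1):
        speed = max_speed * i / n_speeds
        for k in range(n_angles):
            theta = 2.0 * math.pi * k / n_angles
            candidates.append((speed * math.cos(theta),
                               speed * math.sin(theta)))

    def is_safe(v):
        for ped_pos, ped_vel, ped_radius in pedestrians:
            rel_pos = (ped_pos[0] - robot_pos[0], ped_pos[1] - robot_pos[1])
            rel_vel = (ped_vel[0] - v[0], ped_vel[1] - v[1])
            combined = robot_radius + ped_radius + personal_space
            if collides(rel_pos, rel_vel, combined, horizon):
                return False
        return True

    safe = [v for v in candidates if is_safe(v)]
    if not safe:
        # Illustrative fallback only: stop when no sample is safe.
        return (0.0, 0.0)
    return min(safe, key=lambda v: (v[0] - command[0]) ** 2
                                   + (v[1] - command[1]) ** 2)
```

In a shared-autonomy setting, the difference between `command` and the returned velocity is the kind of quantity that could be rendered to the operator as a haptic force or drawn as a visual guidance cue; how the paper maps it to either modality is not stated in the abstract.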
