MCCE: Missingness-aware Causal Concept Explainer

Nov 14, 2024

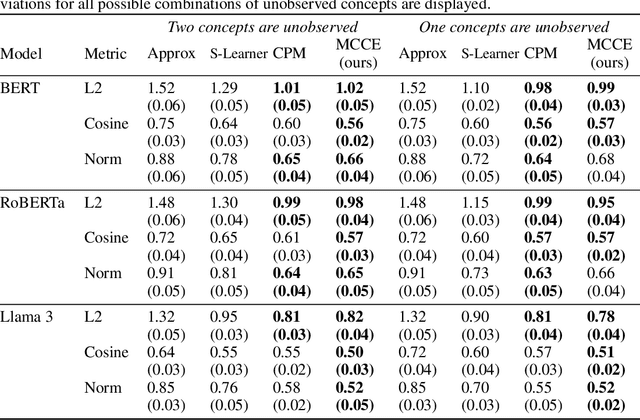

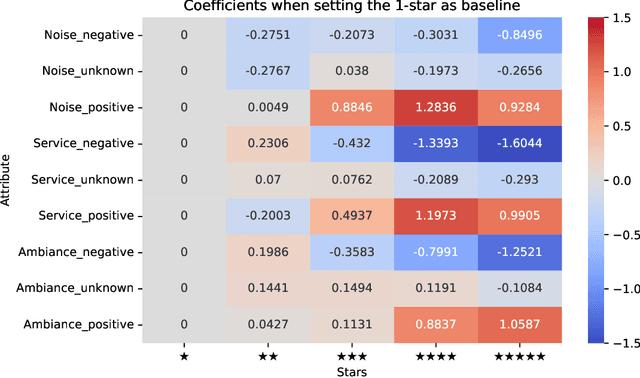

Causal concept effect estimation is attracting growing interest in interpretable machine learning. This approach explains the behavior of machine learning models by estimating the causal effect of human-understandable concepts, which represent high-level knowledge more comprehensibly than raw inputs such as tokens. However, existing causal concept effect explanation methods assume that every concept in the dataset is fully observed, an assumption that can fail in practice because of incomplete annotations or missing concept data. We theoretically demonstrate that unobserved concepts can bias the estimated causal effects of observed concepts. To address this limitation, we introduce the Missingness-aware Causal Concept Explainer (MCCE), a novel framework designed to estimate causal concept effects when not all concepts are observable. Our framework learns to account for the residual bias resulting from missing concepts and uses a linear predictor to model the relationships between these concepts and the outputs of black-box machine learning models. It can offer explanations at both the local and global levels. We validate MCCE on a real-world dataset, demonstrating that it achieves promising performance compared to state-of-the-art explanation methods in causal concept effect estimation.
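The claim that unobserved concepts can bias the estimated effects of observed ones can be illustrated with a toy linear example. The sketch below is not the MCCE method and uses no data from the paper. It assumes a synthetic setting with one observed and one unobserved concept that are correlated, and a stand-in "model output" that is linear in both. The function name, parameters, and all numeric values are hypothetical choices made for illustration only.

```python
import numpy as np


def simulate_omitted_concept_bias(n=20000, rho=0.6, beta_obs=1.0,
                                  beta_miss=2.0, seed=0):
    """Toy illustration (assumed setup, not the paper's experiment).

    Returns the estimated effect of the observed concept when the second
    concept is included in the regression, and when it is left out.
    """
    rng = np.random.default_rng(seed)

    # Two standardized concepts with correlation rho.
    c_obs = rng.normal(size=n)
    c_miss = rho * c_obs + np.sqrt(1.0 - rho**2) * rng.normal(size=n)

    # Stand-in for a black-box model output: linear in both concepts plus noise.
    y = beta_obs * c_obs + beta_miss * c_miss + 0.1 * rng.normal(size=n)

    # Least squares with both concepts available.
    X_full = np.column_stack([c_obs, c_miss])
    coef_full, *_ = np.linalg.lstsq(X_full, y, rcond=None)

    # Least squares with only the observed concept (the other one is missing).
    X_part = c_obs[:, None]
    coef_part, *_ = np.linalg.lstsq(X_part, y, rcond=None)

    return float(coef_full[0]), float(coef_part[0])


if __name__ == "__main__":
    full, partial = simulate_omitted_concept_bias()
    print(f"effect of observed concept, both concepts included: {full:.3f}")
    print(f"effect of observed concept, second concept missing: {partial:.3f}")
```

Under these assumptions, the estimate that includes both concepts stays close to `beta_obs`, while the estimate that omits the second concept drifts toward `beta_obs + rho * beta_miss`, the standard omitted-variable bias for a linear model. This is the kind of residual bias that a missingness-aware explainer would need to account for.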
