Mask CycleGAN: Unpaired Multi-modal Domain Translation with Interpretable Latent Variable

May 14, 2022

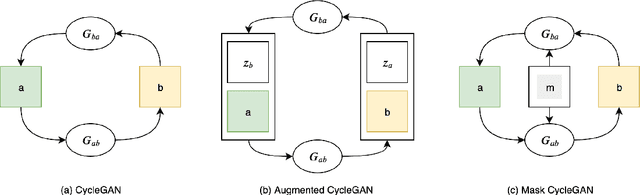

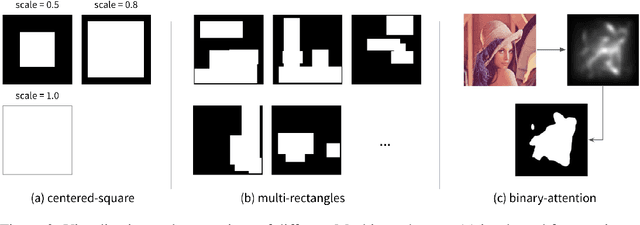

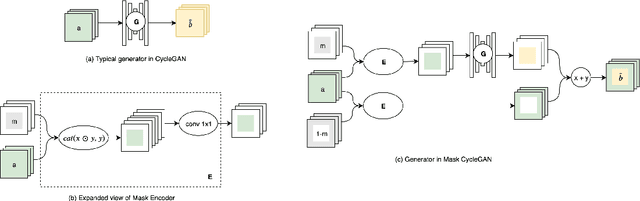

We propose Mask CycleGAN, a novel architecture for unpaired image domain translation built on CycleGAN, which aims to address two issues: 1) unimodality in image translation and 2) lack of interpretability of latent variables. Our technical contribution comprises three key components: a masking scheme, a generator, and an objective. Experimental results demonstrate that this architecture can introduce variations into generated images in a controllable manner and is reasonably robust to different masks.
