Learning Relaxation for Multigrid

Paper and Code

Jul 25, 2022

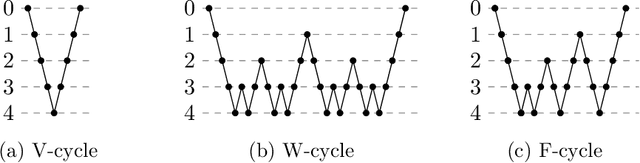

During the last decade, neural networks (NNs) have proved to be extremely effective tools in many fields of engineering, including autonomous vehicles, medical diagnosis and search engines, and even in art creation. Indeed, NNs often decisively outperform traditional algorithms. One area that has only recently attracted significant interest is the use of NNs for designing numerical solvers, particularly for discretized partial differential equations. Several recent papers have considered employing NNs for developing multigrid methods, which are a leading computational tool for solving discretized partial differential equations and other sparse-matrix problems. We extend these new ideas, focusing on so-called relaxation operators (also called smoothers), an important component of the multigrid algorithm that has not yet received much attention in this context. We explore an approach for using NNs to learn relaxation parameters for an ensemble of diffusion operators with random coefficients, for Jacobi-type smoothers and for four-color Gauss-Seidel smoothers. The latter yield exceptionally efficient and easy-to-parallelize successive over-relaxation (SOR) smoothers. Moreover, this work demonstrates that learning relaxation parameters on relatively small grids, using a two-grid method and Gelfand's formula as a loss function, can be implemented easily. These methods efficiently produce nearly optimal parameters, thereby significantly improving the convergence rate of multigrid algorithms on large grids.
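To make the loss concrete: Gelfand's formula states that the spectral radius of a matrix E satisfies ρ(E) = lim ‖E^k‖^(1/k) as k → ∞, so ‖E^k‖^(1/k) for a moderate k gives a computable estimate of the asymptotic convergence factor. The sketch below is not the paper's code. It assumes a much simpler setting than the abstract describes: a 1D Poisson problem in place of 2D diffusion with random coefficients, a damped Jacobi smoother with a single parameter `omega`, dense NumPy matrices, and no neural network. The function names `two_grid_error_matrix` and `gelfand_loss` are hypothetical and chosen only for illustration.

```python
import numpy as np


def two_grid_error_matrix(omega, n=31):
    """Error-propagation matrix of one two-grid cycle for 1D Poisson.

    Assumptions (illustrative only): n interior points (n odd), one damped
    Jacobi pre-smoothing sweep, linear interpolation, full-weighting
    restriction, and a Galerkin coarse-grid operator.
    """
    assert n % 2 == 1, "n must be odd so the coarse grid nests in the fine grid"
    h = 1.0 / (n + 1)
    # Standard second-order finite-difference Laplacian.
    A = (2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)) / h**2

    # Damped Jacobi smoother: S = I - omega * D^{-1} A.
    S = np.eye(n) - omega * np.diag(1.0 / np.diag(A)) @ A

    # Linear interpolation from the coarse grid (every second fine point).
    nc = (n - 1) // 2
    P = np.zeros((n, nc))
    for j in range(nc):
        i = 2 * j + 1          # fine-grid index of coarse point j
        P[i - 1, j] = 0.5
        P[i, j] = 1.0
        P[i + 1, j] = 0.5
    R = 0.5 * P.T              # full-weighting restriction
    Ac = R @ A @ P             # Galerkin coarse-grid operator

    # Coarse-grid correction: C = I - P Ac^{-1} R A.
    C = np.eye(n) - P @ np.linalg.solve(Ac, R @ A)
    return C @ S


def gelfand_loss(omega, n=31, k=10):
    """Finite-k Gelfand estimate ||E^k||_F^(1/k) of the spectral radius."""
    E = two_grid_error_matrix(omega, n)
    Ek = np.linalg.matrix_power(E, k)
    return np.linalg.norm(Ek, ord="fro") ** (1.0 / k)


if __name__ == "__main__":
    for omega in (2.0 / 3.0, 1.0):
        E = two_grid_error_matrix(omega)
        rho = np.max(np.abs(np.linalg.eigvals(E)))
        print(f"omega={omega:.3f}  gelfand={gelfand_loss(omega):.4f}  rho={rho:.4f}")
```

Because the Frobenius norm is submultiplicative and bounds the spectral radius from above, this estimate never falls below ρ(E) and approaches it as `k` grows. Unlike an eigenvalue computation, it is built only from matrix products and a norm, which is presumably what makes it convenient as a differentiable training loss. In a learning setup, `omega` would be the output of a trained model rather than a fixed number.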
