Lattice Representation Learning

Jun 24, 2020

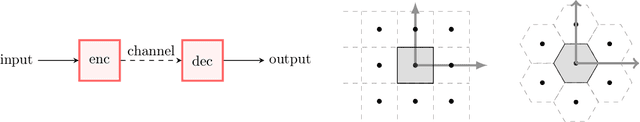

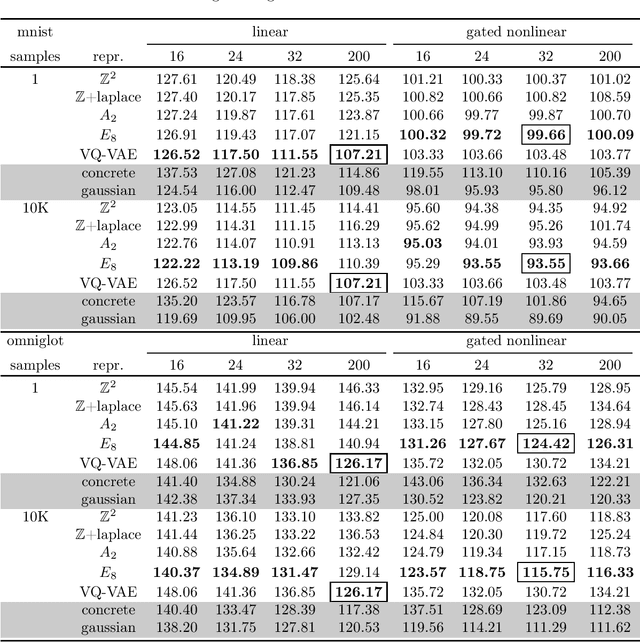

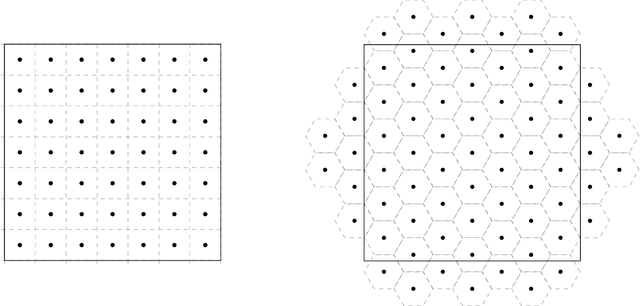

In this article we introduce theory and algorithms for learning discrete representations that take values on a lattice embedded in a Euclidean space. Lattice representations possess an interesting combination of properties: a) they can be computed explicitly using lattice quantization, yet they can be learned efficiently using the ideas we introduce in this paper; b) they are closely related to Gaussian Variational Autoencoders, allowing designers familiar with the latter to easily produce discrete representations from their models; and c) since lattices satisfy the axioms of a group, their adoption can lead to a way of learning simple algebras that model binary operations between objects through symbolic formalisms, while still learning these structures formally using differentiation techniques. This article focuses on laying the groundwork for exploring and exploiting the first two properties, including a new mathematical result linking the expressions used at training time and at inference time, and experimental validation on two popular datasets.
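To make the term "lattice quantization" concrete, the following is a minimal sketch for the simplest possible case, the scaled integer lattice (every coordinate is a multiple of a step size). It is not the paper's method: the function names `quantize_cubic` and `add_points`, the step size `delta`, and the choice of a cubic lattice are all assumptions made for illustration. The sketch also checks the group property mentioned in c), namely that the sum of two lattice points is again a lattice point.

```python
import math


def quantize_cubic(x, delta=1.0):
    """Map each coordinate of x to the nearest multiple of delta.

    Illustrative only: this is nearest-point quantization on the scaled
    integer lattice delta * Z^n, assumed here as the simplest example.
    Ties are rounded upward (floor(v + 0.5)).
    """
    return [delta * math.floor(v / delta + 0.5) for v in x]


def add_points(p, q):
    """Coordinate-wise sum of two points (the lattice group operation)."""
    return [a + b for a, b in zip(p, q)]


# A continuous vector, e.g. an encoder output (hypothetical values).
z = [0.26, -1.49, 2.5]
code = quantize_cubic(z, delta=0.5)

# Closure under addition: the sum of two lattice points is unchanged
# by quantization, because it already lies on the lattice.
other = [1.0, 0.5, -3.0]
total = add_points(code, other)
is_closed = quantize_cubic(total, delta=0.5) == total
```

The quantizer is explicit and cheap to evaluate, but it is piecewise constant, so its gradient is zero almost everywhere. That is the reason a separate learning strategy, such as the one the abstract refers to, is needed at training time.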
