Improving Quality of Hierarchical Clustering for Large Data Series

Aug 03, 2016

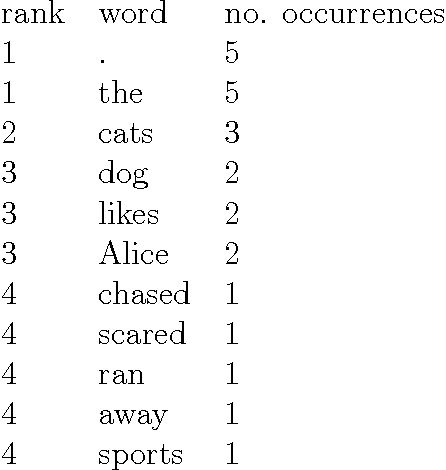

Brown clustering is a hard, hierarchical, bottom-up clustering of words in a vocabulary. Words are assigned to clusters based on their usage patterns in a given corpus. The resulting clusters and hierarchical structure can be used to construct class-based language models and to generate features for NLP tasks. Because of its high computational cost, the most widely used version of Brown clustering is a greedy algorithm that uses a window to restrict its search space. Like other clustering algorithms, Brown clustering finds a sub-optimal, but nonetheless effective, mapping of words to clusters. Because of its ability to produce high-quality, human-understandable clusters, Brown clustering has seen high uptake in the NLP research community, where it is used in the preprocessing and feature generation steps. Little research has been done towards improving the quality of Brown clusters, despite the greedy and heuristic nature of the algorithm. The approaches tried so far have focused on: studying the effect of the initialisation in a similar algorithm; tuning the parameters used to define the desired number of clusters and the behaviour of the algorithm; and including a separate parameter to differentiate the window from the desired number of clusters. However, some of these approaches have not yielded significant improvements in cluster quality. In this thesis, a close analysis of the Brown algorithm is provided, revealing important under-specifications and weaknesses in the original algorithm. These have serious effects on cluster quality and on the reproducibility of research using Brown clustering. In the second part of the thesis, two modifications are proposed. Finally, a thorough evaluation is performed, considering both the optimization criterion of Brown clustering and the performance of the resulting class-based language models.
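To make the windowed greedy procedure concrete, the following is a minimal Python sketch, not the thesis's implementation. It assumes the standard Brown criterion (average mutual information between the clusters of adjacent words), adds words in order of decreasing frequency, and merges the best pair of clusters whenever the number of clusters exceeds the window. The function names, the toy corpus, and the tie-breaking rules (alphabetical order for equally frequent words, first-found pair for equally good merges) are assumptions made for illustration. The sketch recomputes the criterion from scratch for every candidate merge, which is far slower than practical implementations, and it does not record the merge hierarchy.

```python
from collections import Counter
from itertools import combinations
from math import log2


def average_mutual_information(tokens, cluster_of):
    """Average mutual information between the clusters of adjacent words.

    Bigrams containing a word that has no cluster yet are skipped.
    """
    pairs = Counter(
        (cluster_of[a], cluster_of[b])
        for a, b in zip(tokens, tokens[1:])
        if a in cluster_of and b in cluster_of
    )
    total = sum(pairs.values())
    if total == 0:
        return 0.0
    left, right = Counter(), Counter()
    for (c1, c2), n in pairs.items():
        left[c1] += n
        right[c2] += n
    return sum(
        (n / total) * log2(n * total / (left[c1] * right[c2]))
        for (c1, c2), n in pairs.items()
    )


def merge_best_pair(tokens, cluster_of):
    """Merge the two clusters whose merge keeps the criterion highest."""
    labels = sorted(set(cluster_of.values()))
    best_score, best_mapping = None, None
    for a, b in combinations(labels, 2):
        # Candidate mapping in which cluster b is absorbed into cluster a.
        trial = {w: (a if c == b else c) for w, c in cluster_of.items()}
        score = average_mutual_information(tokens, trial)
        # Strict comparison: the first pair found wins ties (arbitrary choice).
        if best_score is None or score > best_score:
            best_score, best_mapping = score, trial
    return best_mapping


def windowed_brown(tokens, window):
    """Greedy clustering that never keeps more than `window` clusters."""
    freq = Counter(tokens)
    # Most frequent words first; alphabetical order breaks frequency ties.
    order = sorted(freq, key=lambda w: (-freq[w], w))
    cluster_of = {}
    for word in order:
        cluster_of[word] = word  # each new word starts in its own cluster
        if len(set(cluster_of.values())) > window:
            cluster_of = merge_best_pair(tokens, cluster_of)
    return cluster_of


# Toy corpus, invented for this example.
tokens = "the cat sat the dog sat the cat ran the dog ran".split()
clusters = windowed_brown(tokens, window=3)
print(clusters)
```

In this sketch the window and the final number of clusters are the same value; separating the two is one of the earlier approaches mentioned in the abstract. Because the outcome depends on how ties are broken, a different but equally defensible tie-breaking rule can produce different clusters from the same corpus.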
