General factorization framework for context-aware recommendations


May 19, 2015

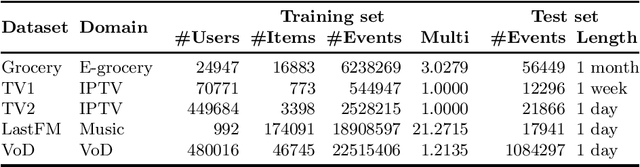

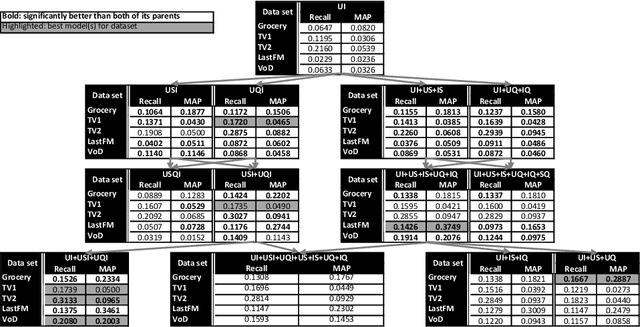

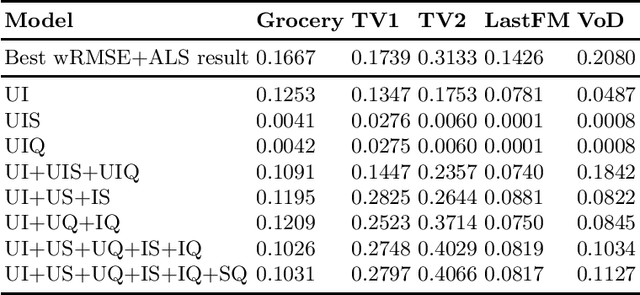

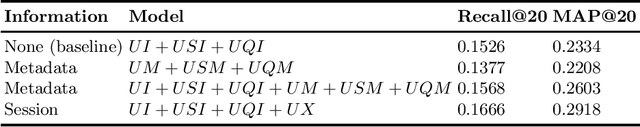

Context-aware recommendation algorithms focus on refining recommendations by considering additional information available to the system. This topic has gained a lot of attention recently. Among others, several factorization methods have been proposed to solve the problem, although most of them assume explicit feedback, which strongly limits their real-world applicability. While these algorithms apply various loss functions and optimization strategies, preference modeling under context is less explored, due to the lack of tools that allow easy experimentation with various models. As context dimensions are introduced beyond users and items, both the space of possible preference models and the importance of proper modeling increase considerably. In this paper we propose a General Factorization Framework (GFF), a single flexible algorithm that takes the preference model as an input and computes latent feature matrices for the input dimensions. GFF allows us to easily experiment with various linear models on any context-aware recommendation task, be it based on explicit or implicit feedback. Its scaling properties make it usable under real-life circumstances as well. We demonstrate the framework's potential by exploring various preference models on a 4-dimensional context-aware problem with contexts that are available for almost any real-life dataset. We show in our experiments, performed on five real-life implicit feedback datasets, that proper preference modeling significantly increases recommendation accuracy, and that previously unused models outperform the traditional ones. Novel models in GFF also outperform state-of-the-art factorization algorithms. We also extend the method to be fully compliant with the Multidimensional Dataspace Model, one of the most extensive data models of context-enriched data. Extended GFF allows the seamless incorporation of information into the fac[truncated]
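To make "taking the preference model as an input" concrete, here is a minimal sketch, assuming that a linear preference model is written as a sum of terms, where each term is an N-way interaction between the latent feature vectors of the listed dimensions (the sum over latent factors of their elementwise product). The dimension names `U`, `I`, `S`, the feature values, and the helpers `n_way_product` and `predict` are hypothetical illustrations. The sketch only evaluates a given model for one tuple; it does not reproduce how GFF learns the latent feature matrices.

```python
from typing import Dict, List, Sequence

# Hypothetical latent feature vector of one entity (e.g. a user or an item).
Vector = List[float]


def n_way_product(vectors: Sequence[Vector]) -> float:
    """Sum over latent factors of the elementwise product of all vectors.

    For two vectors this is the ordinary dot product; for three or more it is
    the N-way generalization used by factorization models over several dimensions.
    """
    total = 0.0
    for f in range(len(vectors[0])):
        prod = 1.0
        for v in vectors:
            prod *= v[f]
        total += prod
    return total


def predict(model: List[Sequence[str]], features: Dict[str, Vector]) -> float:
    """Evaluate a preference model given as a list of terms (tuples of dimension names)."""
    return sum(n_way_product([features[d] for d in term]) for term in model)


# Assumed example: feature vectors for one (user, item, context) tuple, 2 latent factors.
features = {"U": [1.0, 2.0], "I": [0.5, -1.0], "S": [2.0, 1.0]}

# Hypothetical model U·I + U·I·S + I·S; the traditional model would be just [("U", "I")].
model = [("U", "I"), ("U", "I", "S"), ("I", "S")]
score = predict(model, features)
```

Here `score` evaluates to -2.5 (U·I = -1.5, U·I·S = -1.0, I·S = 0.0). Swapping in a different list of terms changes the preference model without touching the evaluation code, which is the kind of flexibility the abstract attributes to GFF.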