Exploring the Statistical Derivation of Transformational Rule Sequences for Part-of-Speech Tagging

Paper and Code

Jun 03, 1994

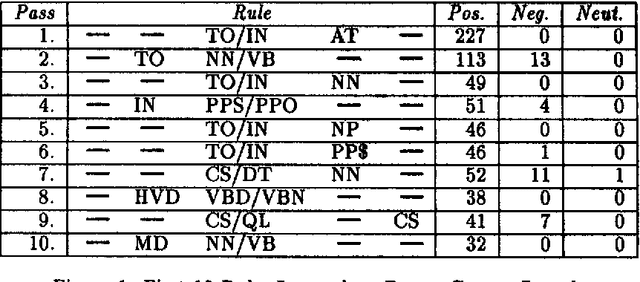

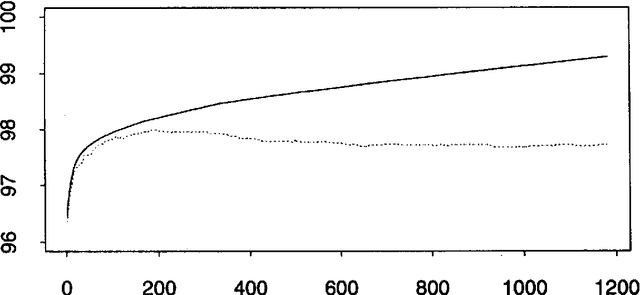

Eric Brill has recently proposed a simple and powerful corpus-based language modeling approach that can be applied to various tasks including part-of-speech tagging and building phrase structure trees. The method learns a series of symbolic transformational rules, which can then be applied in sequence to a test corpus to produce predictions. The learning process only requires counting matches for a given set of rule templates, allowing the method to survey a very large space of possible contextual factors. This paper analyses Brill's approach as an interesting variation on existing decision tree methods, based on experiments involving part-of-speech tagging for both English and ancient Greek corpora. In particular, the analysis throws light on why the new mechanism seems surprisingly resistant to overtraining. A fast, incremental implementation and a mechanism for recording the dependencies that underlie the resulting rule sequence are also described.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge