Effectiveness of Hierarchical Softmax in Large Scale Classification Tasks


Dec 13, 2018

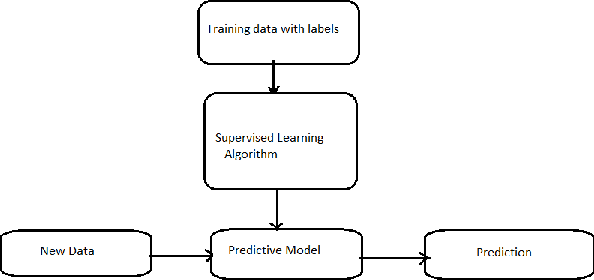

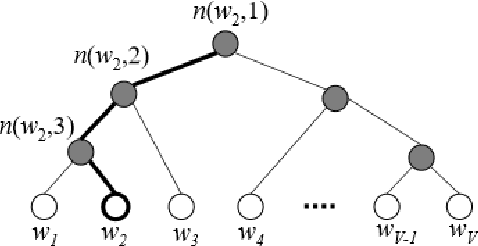

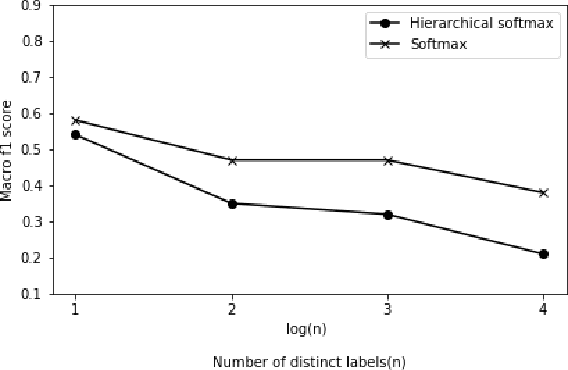

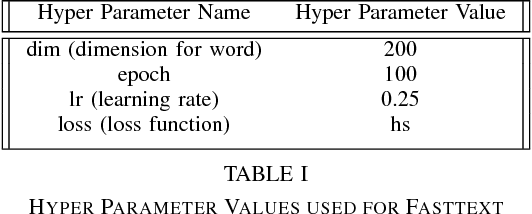

Softmax is typically used in the final layer of a neural network to produce a probability distribution over the output classes. Its main drawback is computational cost: normalizing the distribution requires scoring every class, which becomes expensive on large-scale datasets with a large number of possible outputs. Hierarchical Softmax can be used to approximate class probabilities efficiently on such datasets. In this paper, we evaluate and report the performance of standard Softmax versus Hierarchical Softmax on the LSHTC datasets, which have a large number of categories. The evaluation uses macro F1 score as the performance measure. We observe that the performance of Hierarchical Softmax degrades as the number of classes increases.
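To make the cost argument concrete, here is a minimal NumPy sketch of a two-level Hierarchical Softmax next to a flat Softmax. It is an illustration only, not the paper's implementation: the abstract does not describe the hierarchy used, so the even split of classes into fixed-size clusters, the random weights, and all names (`two_level_prob`, `W_cluster`, `W_within`, `cluster_size`) are assumptions made for this example.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D score vector."""
    z = z - np.max(z)
    e = np.exp(z)
    return e / e.sum()

def flat_softmax_prob(h, W, target):
    """Flat softmax: scores all K classes, so the cost is O(K * d)."""
    return softmax(W @ h)[target]

def two_level_prob(h, W_cluster, W_within, target, cluster_size):
    """Two-level hierarchical softmax (assumed layout: class t lives in
    cluster t // cluster_size at position t % cluster_size).

    P(class | h) = P(cluster | h) * P(class | cluster, h), so only
    n_clusters + cluster_size rows are scored instead of K.
    """
    c, j = divmod(target, cluster_size)
    p_cluster = softmax(W_cluster @ h)[c]     # choose the cluster
    p_within = softmax(W_within[c] @ h)[j]    # choose the class inside it
    return p_cluster * p_within

# Hypothetical sizes and random weights, for illustration only.
rng = np.random.default_rng(0)
d, n_clusters, cluster_size = 16, 20, 20
K = n_clusters * cluster_size                 # 400 classes in total

h = rng.normal(size=d)                        # hidden representation
W_flat = rng.normal(size=(K, d))
W_cluster = rng.normal(size=(n_clusters, d))
W_within = rng.normal(size=(n_clusters, cluster_size, d))

p_flat = flat_softmax_prob(h, W_flat, target=123)
p_hier = two_level_prob(h, W_cluster, W_within, 123, cluster_size)

# The factorized probabilities still form a valid distribution over classes.
total = sum(
    two_level_prob(h, W_cluster, W_within, t, cluster_size) for t in range(K)
)
rows_scored_flat = K
rows_scored_hier = n_clusters + cluster_size
```

With these sizes the flat version scores 400 weight rows per prediction while the two-level version scores 40; with roughly √K clusters, the per-example cost falls from O(K) to about O(√K). A deeper tree reduces it further, at the price of more decisions along the path, each of which can introduce error.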
