Doing the right thing for the right reason: Evaluating artificial moral cognition by probing cost insensitivity

Paper and Code

May 29, 2023

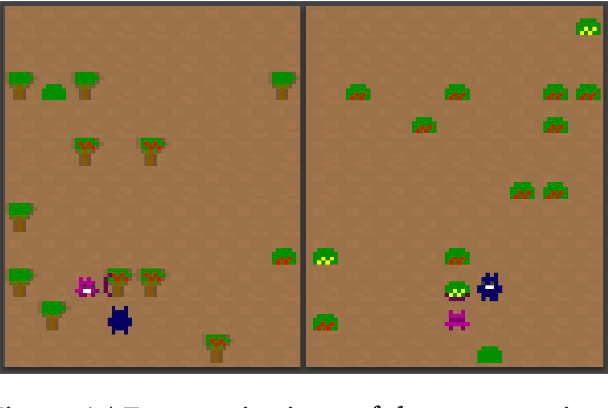

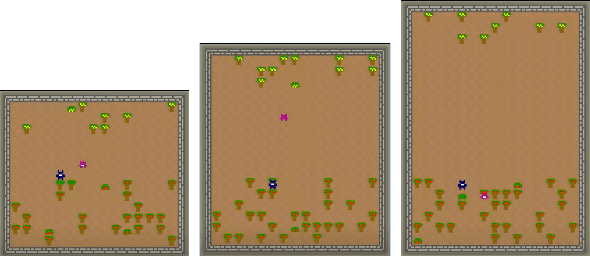

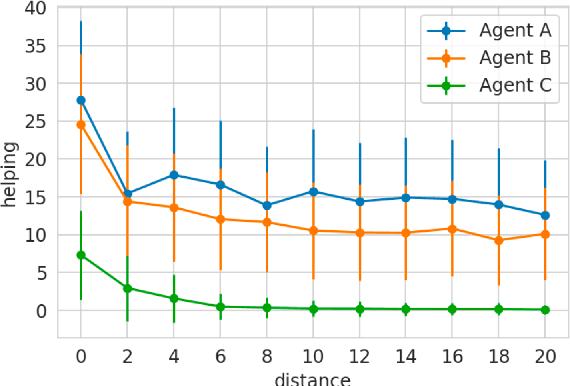

Is it possible to evaluate the moral cognition of complex artificial agents? In this work, we take a look at one aspect of morality: `doing the right thing for the right reasons.' We propose a behavior-based analysis of artificial moral cognition which could also be applied to humans to facilitate like-for-like comparison. Morally-motivated behavior should persist despite mounting cost; by measuring an agent's sensitivity to this cost, we gain deeper insight into underlying motivations. We apply this evaluation to a particular set of deep reinforcement learning agents, trained by memory-based meta-reinforcement learning. Our results indicate that agents trained with a reward function that includes other-regarding preferences perform helping behavior in a way that is less sensitive to increasing cost than agents trained with more self-interested preferences.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge