Convergence of gradient descent for deep neural networks

Mar 30, 2022

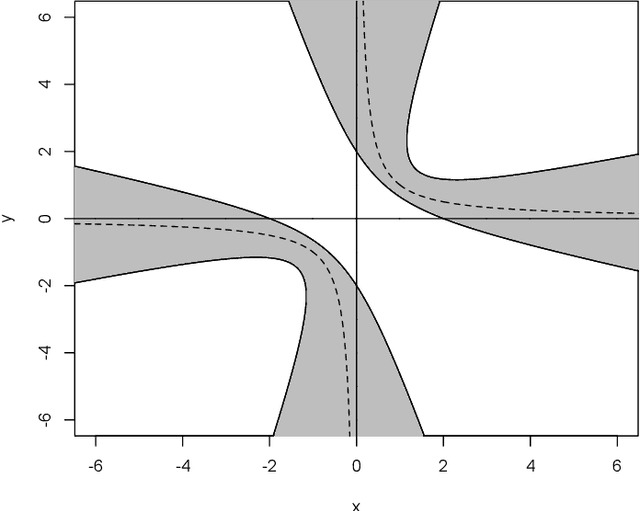

Optimization by gradient descent has been one of the main drivers of the "deep learning revolution". Yet, despite some recent progress for extremely wide networks, it remains an open problem to understand why gradient descent often converges to global minima when training deep neural networks. This article presents a new criterion for convergence of gradient descent to a global minimum, which is provably more powerful than the best available criteria from the literature, namely, the Łojasiewicz inequality and its generalizations. This criterion is used to show that gradient descent with proper initialization converges to a global minimum when training any feedforward neural network with smooth and strictly increasing activation functions, provided that the input dimension is greater than or equal to the number of data points.
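To make the setting concrete, here is a minimal sketch of the regime the abstract describes: full-batch gradient descent on a small feedforward network with a smooth, strictly increasing activation (tanh), where the input dimension is at least the number of data points. Everything in it is an illustrative assumption rather than the paper's construction: the function name `train_tiny_network`, the one-hidden-layer architecture, the squared loss, the synthetic data, the step size, and in particular the plain random initialization, which is not the "proper initialization" the result requires. It only shows what an experiment in this regime might look like and proves nothing.

```python
import math
import random


def train_tiny_network(n_points=4, in_dim=6, hidden=5, lr=0.01, steps=3000, seed=0):
    """Full-batch gradient descent on f(x) = v . tanh(W x) with squared loss.

    Returns the loss values, one per step, each measured before that step's update.
    All sizes and hyperparameters are hypothetical choices for illustration.
    """
    rng = random.Random(seed)

    # Synthetic data with in_dim >= n_points, the regime named in the abstract.
    X = [[rng.gauss(0.0, 1.0) for _ in range(in_dim)] for _ in range(n_points)]
    y = [rng.uniform(-1.0, 1.0) for _ in range(n_points)]

    # Plain random initialization (an assumption, not the paper's scheme).
    W = [[rng.gauss(0.0, 1.0 / math.sqrt(in_dim)) for _ in range(in_dim)]
         for _ in range(hidden)]
    v = [rng.gauss(0.0, 1.0 / math.sqrt(hidden)) for _ in range(hidden)]

    losses = []
    for _ in range(steps):
        grad_W = [[0.0] * in_dim for _ in range(hidden)]
        grad_v = [0.0] * hidden
        loss = 0.0
        for x, target in zip(X, y):
            # Forward pass: hidden activations, then the scalar output error.
            h = [math.tanh(sum(w * xj for w, xj in zip(row, x))) for row in W]
            err = sum(vk * hk for vk, hk in zip(v, h)) - target
            loss += 0.5 * err * err
            # Backward pass, using tanh'(z) = 1 - tanh(z)^2.
            for k in range(hidden):
                grad_v[k] += err * h[k]
                d_pre = err * v[k] * (1.0 - h[k] ** 2)
                for j in range(in_dim):
                    grad_W[k][j] += d_pre * x[j]
        losses.append(loss)
        # Gradient descent update on both layers.
        for k in range(hidden):
            v[k] -= lr * grad_v[k]
            for j in range(in_dim):
                W[k][j] -= lr * grad_W[k][j]
    return losses


if __name__ == "__main__":
    history = train_tiny_network()
    print("initial loss:", history[0])
    print("final loss:  ", history[-1])
```

Comparing the first and last entries of the returned list shows how far the training loss has dropped. Whether it approaches zero in a given run depends on the step size, the number of steps, and the initialization, which is exactly the kind of dependence the convergence criterion is meant to control.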
