ContainerStress: Autonomous Cloud-Node Scoping Framework for Big-Data ML Use Cases

Paper and Code

Mar 18, 2020

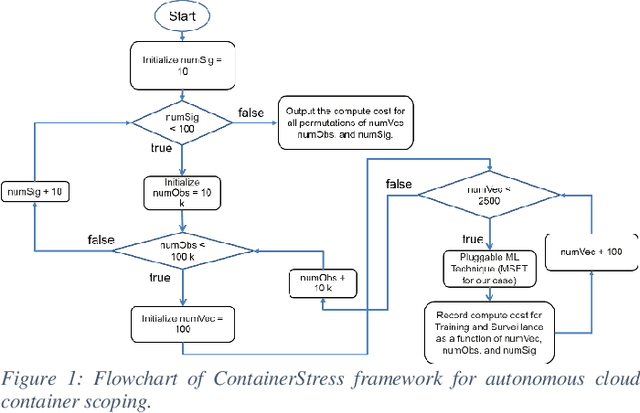

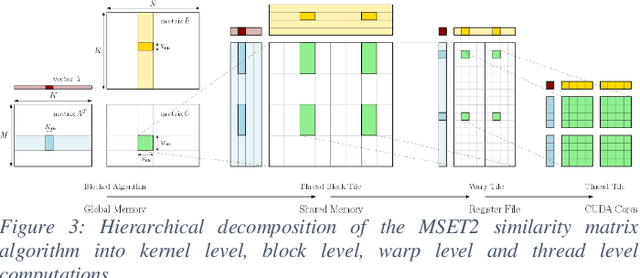

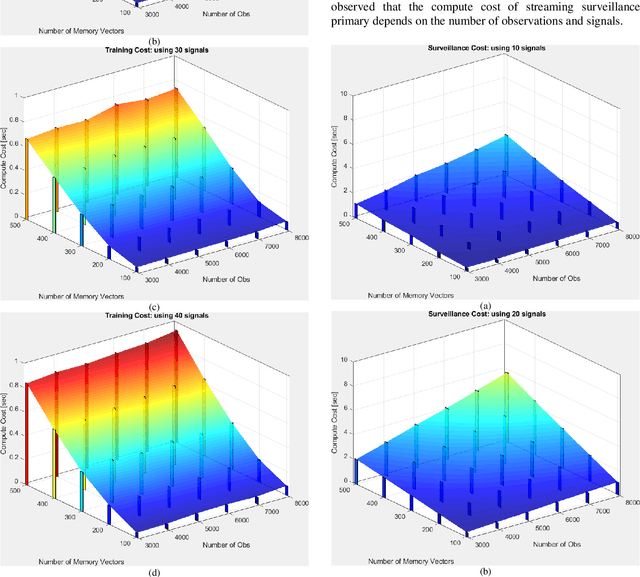

Deploying big-data Machine Learning (ML) services in a cloud environment presents a challenge to the cloud vendor with respect to the cloud container configuration sizing for any given customer use case. OracleLabs has developed an automated framework that uses nested-loop Monte Carlo simulation to autonomously scale any size customer ML use cases across the range of cloud CPU-GPU "Shapes" (configurations of CPUs and/or GPUs in Cloud containers available to end customers). Moreover, the OracleLabs and NVIDIA authors have collaborated on a ML benchmark study which analyzes the compute cost and GPU acceleration of any ML prognostic algorithm and assesses the reduction of compute cost in a cloud container comprising conventional CPUs and NVIDIA GPUs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge