Comparing Correspondences: Video Prediction with Correspondence-wise Losses

Paper and Code

Apr 19, 2021

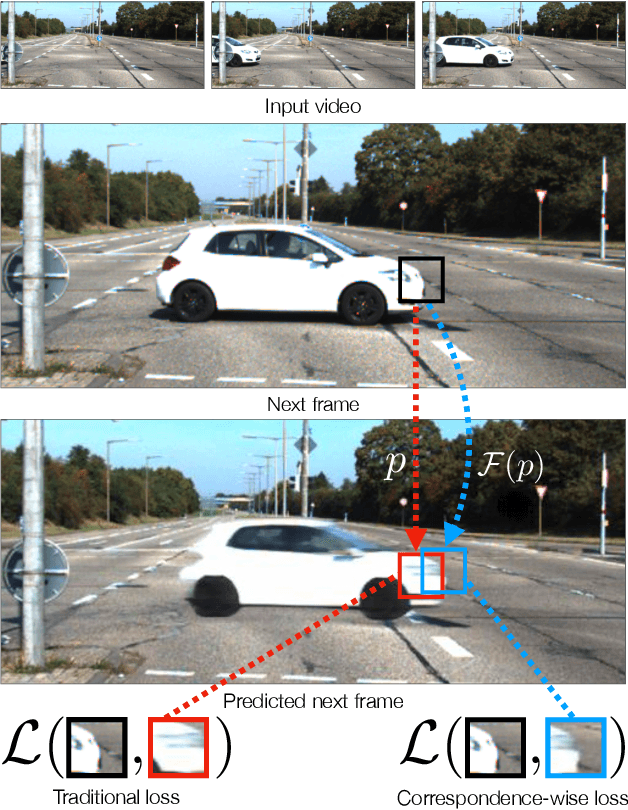

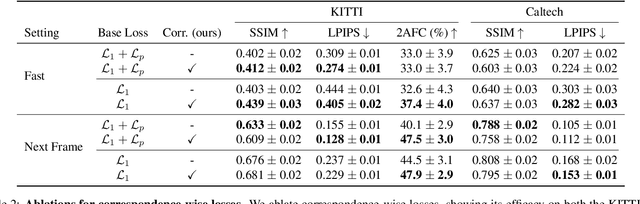

Today's image prediction methods struggle to change the locations of objects in a scene, producing blurry images that average over the many positions they might occupy. In this paper, we propose a simple change to existing image similarity metrics that makes them more robust to positional errors: we match the images using optical flow, then measure the visual similarity of corresponding pixels. This change leads to crisper and more perceptually accurate predictions, and can be used with any image prediction network. We apply our method to predicting future frames of a video, where it obtains strong performance with simple, off-the-shelf architectures.
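The two steps described in the abstract (match the images with optical flow, then compare corresponding pixels) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation. It assumes the flow field is already available as an `(H, W, 2)` array of per-pixel `(dx, dy)` offsets from the target to the prediction, uses nearest-neighbour warping, and takes L1 as the underlying similarity metric. The function names `warp_with_flow` and `correspondence_wise_l1` are hypothetical and chosen for illustration only.

```python
import numpy as np


def warp_with_flow(image, flow):
    """Backward-warp `image` so that out[y, x] = image[y + dy, x + dx].

    `flow[..., 0]` holds dx and `flow[..., 1]` holds dy (an assumed layout).
    Nearest-neighbour sampling; out-of-range coordinates are clipped.
    """
    h, w = image.shape[:2]
    ys, xs = np.mgrid[0:h, 0:w]
    src_x = np.clip(np.rint(xs + flow[..., 0]).astype(int), 0, w - 1)
    src_y = np.clip(np.rint(ys + flow[..., 1]).astype(int), 0, h - 1)
    return image[src_y, src_x]


def correspondence_wise_l1(pred, target, flow):
    """L1 distance between each target pixel and its matched prediction pixel."""
    aligned_pred = warp_with_flow(pred, flow)
    return float(np.mean(np.abs(aligned_pred - target)))


# Toy example: the prediction contains the right object, one pixel too far right.
target = np.zeros((8, 8))
target[2:5, 2:5] = 1.0
pred = np.zeros((8, 8))
pred[2:5, 3:6] = 1.0

# Assumed flow: every target pixel matches the prediction pixel one step right.
flow = np.zeros((8, 8, 2))
flow[..., 0] = 1.0

plain_l1 = float(np.mean(np.abs(pred - target)))      # 0.09375
corr_l1 = correspondence_wise_l1(pred, target, flow)  # 0.0
```

In this toy case the plain pixel-wise loss penalizes a prediction whose appearance is correct but slightly misplaced, while the correspondence-wise version does not. In practice the flow would come from an optical flow estimator rather than being supplied by hand.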

* Website at http://dangeng.github.io/CorrWiseLosses
