Communication-Efficient Weighted Sampling and Quantile Summary for GBDT

Paper and Code

Sep 17, 2019

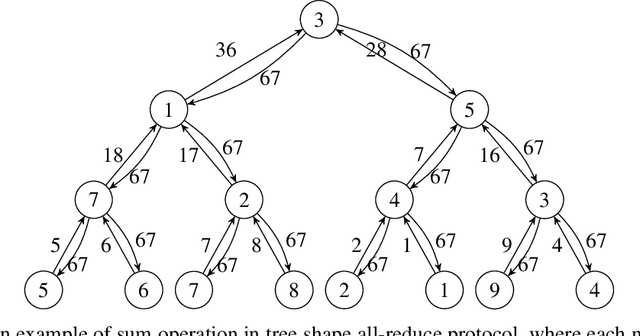

Gradient boosting decision tree (GBDT) is a powerful and widely used machine learning model that has achieved state-of-the-art performance in many academic areas and production environments. However, communication overhead is the main bottleneck in distributed training, which is needed to handle today's massive datasets. In this paper, we propose two novel communication-efficient methods over distributed datasets to mitigate this problem: a weighted sampling approach, by which the information gain can be estimated efficiently over a small subset, and distributed protocols for the weighted quantile problem used in approximate tree learning.
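To make the weighted quantile problem concrete, here is a minimal single-machine sketch in Python. It is not the distributed protocol proposed in the paper. The function name `weighted_quantile_candidates`, its parameters, and the exact bucketing rule are assumptions chosen for illustration. It picks split candidates for one feature so that each bucket holds roughly the same total weight.

```python
import numpy as np

def weighted_quantile_candidates(values, weights, num_buckets):
    """Return split candidates that cut the total weight into roughly
    equal parts. Illustrative only: exact, single-machine, one feature."""
    values = np.asarray(values, dtype=float)
    weights = np.asarray(weights, dtype=float)

    # Sort feature values and carry the weights along.
    order = np.argsort(values, kind="stable")
    sorted_values = values[order]
    cumulative = np.cumsum(weights[order])
    total = cumulative[-1]

    # Target cumulative weights at k / num_buckets of the total,
    # for k = 1 .. num_buckets - 1.
    targets = total * np.arange(1, num_buckets) / num_buckets

    # First position whose cumulative weight reaches each target.
    idx = np.searchsorted(cumulative, targets, side="left")

    # Duplicate candidates carry no extra information, so drop them.
    return np.unique(sorted_values[idx])

if __name__ == "__main__":
    # With a heavy weight on the largest value, the median candidate
    # moves toward it.
    print(weighted_quantile_candidates([1, 2, 3, 4], [1, 1, 1, 1], 2))
    print(weighted_quantile_candidates([1, 2, 3, 4], [1, 1, 1, 5], 2))
```

In a distributed setting every worker would hold only part of `values` and `weights`, so an exact computation like this one would require shipping all the data to one place. That communication cost is what the proposed protocols aim to reduce.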
