Coconditional Autoencoding Adversarial Networks for Chinese Font Feature Learning

Dec 12, 2018

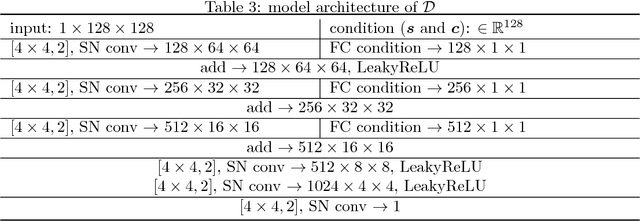

In this work, we propose a novel framework named Coconditional Autoencoding Adversarial Networks (CocoAAN) for Chinese font learning, which uses an adversarial process to jointly learn a generation network and two encoding networks, one for each feature domain. The encoding networks map glyph images to style and content features, respectively, via a pairwise substitution optimization strategy, and the generation network maps these two kinds of features back to glyph samples. Together with a discriminative network conditioned on the extracted features, our framework flexibly produces realistic-looking Chinese glyph images. Unlike previous models that rely on complex segmentation of Chinese characters into components or strokes, our model can "parse" structures in an unsupervised way, through which it captures the content feature representation of each character. Experiments demonstrate that our framework generalizes well to unseen fonts and characters.
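The data flow described above can be illustrated with a minimal sketch. It is not the authors' implementation: the linear layers, the dimensions, and the function names (`encode_style`, `encode_content`, `generate`, `discriminate`, `substitute`) are hypothetical stand-ins, and the `substitute` step is only one plausible reading of "pairwise substitution" (style taken from one glyph, content from another), which the abstract does not define in detail. No training loop or loss is shown.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy sizes, chosen for illustration only (assumptions, not from the paper).
IMG = 16 * 16        # flattened glyph image
STYLE_DIM = 8        # style feature size
CONTENT_DIM = 12     # content feature size

# Hypothetical linear stand-ins for the four networks.
W_style = rng.normal(scale=0.1, size=(IMG, STYLE_DIM))
W_content = rng.normal(scale=0.1, size=(IMG, CONTENT_DIM))
W_gen = rng.normal(scale=0.1, size=(STYLE_DIM + CONTENT_DIM, IMG))
W_disc = rng.normal(scale=0.1, size=(IMG + STYLE_DIM + CONTENT_DIM,))


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def encode_style(x):
    """Style encoder: glyph image -> style feature."""
    return np.tanh(x @ W_style)


def encode_content(x):
    """Content encoder: glyph image -> content feature."""
    return np.tanh(x @ W_content)


def generate(style, content):
    """Generator: (style, content) features -> glyph image in (0, 1)."""
    return sigmoid(np.concatenate([style, content], axis=-1) @ W_gen)


def discriminate(x, style, content):
    """Discriminator conditioned on both features: returns a realness score."""
    return sigmoid(np.concatenate([x, style, content], axis=-1) @ W_disc)


def substitute(x_style_src, x_content_src):
    """Assumed substitution step: style from one glyph, content from another."""
    s = encode_style(x_style_src)
    c = encode_content(x_content_src)
    return generate(s, c), s, c


# Random arrays stand in for two batches of glyph images.
x_a = rng.random((4, IMG))   # glyphs supplying the style
x_b = rng.random((4, IMG))   # glyphs supplying the content
fake, s, c = substitute(x_a, x_b)
score = discriminate(fake, s, c)
```

In an actual adversarial setup, the discriminator's score on real and generated glyphs would drive the updates of the encoders and the generator; here the weights stay random and only the shapes and conditioning are shown.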
