CNN+RNN Depth and Skeleton based Dynamic Hand Gesture Recognition

Paper and Code

Jul 22, 2020

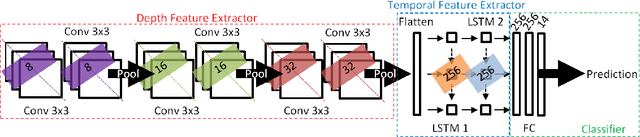

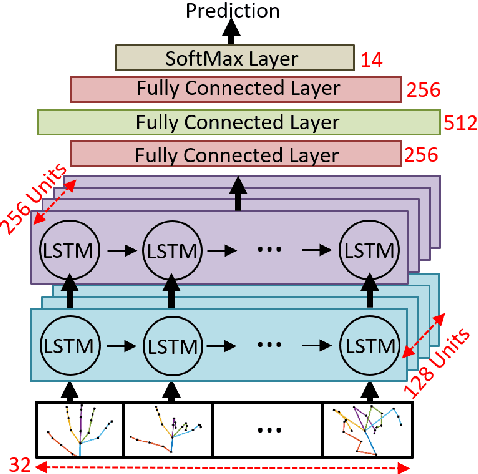

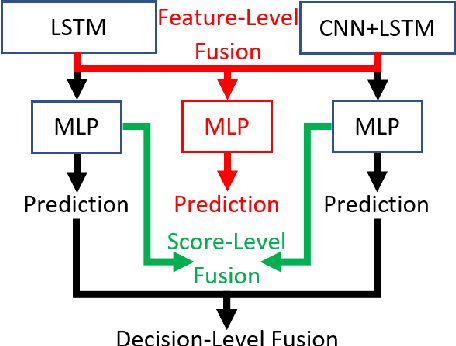

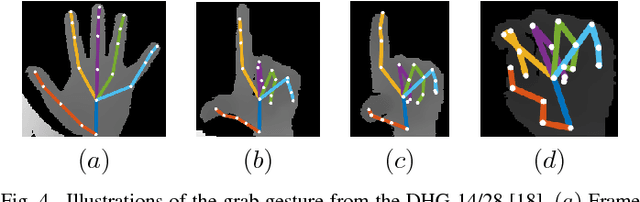

Human activity and gesture recognition is an important component of rapidly growing domain of ambient intelligence, in particular in assisting living and smart homes. In this paper, we propose to combine the power of two deep learning techniques, the convolutional neural networks (CNN) and the recurrent neural networks (RNN), for automated hand gesture recognition using both depth and skeleton data. Each of these types of data can be used separately to train neural networks to recognize hand gestures. While RNN were reported previously to perform well in recognition of sequences of movement for each skeleton joint given the skeleton information only, this study aims at utilizing depth data and apply CNN to extract important spatial information from the depth images. Together, the tandem CNN+RNN is capable of recognizing a sequence of gestures more accurately. As well, various types of fusion are studied to combine both the skeleton and depth information in order to extract temporal-spatial information. An overall accuracy of 85.46% is achieved on the dynamic hand gesture-14/28 dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge