Can large language models be privacy preserving and fair medical coders?

Paper and Code

Dec 07, 2024

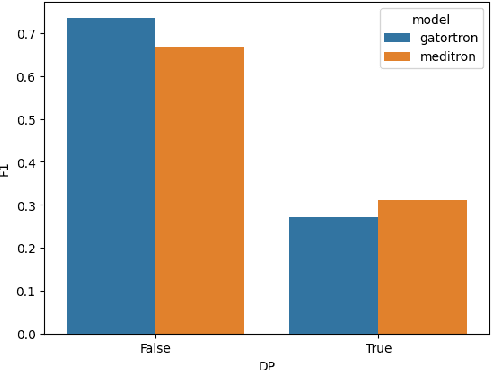

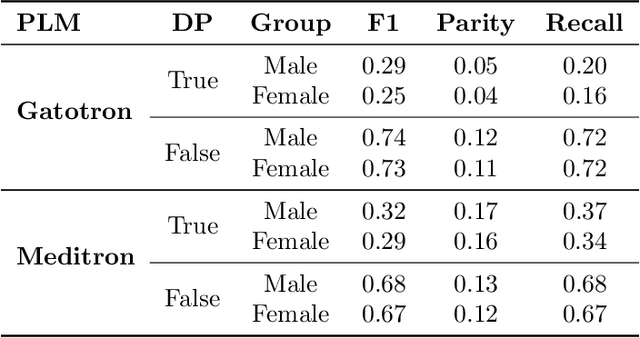

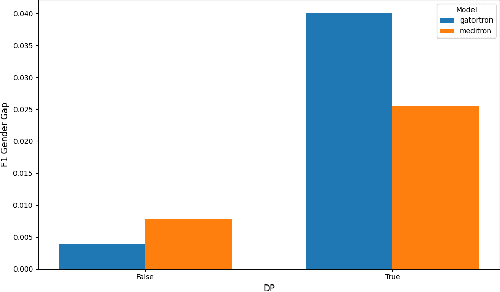

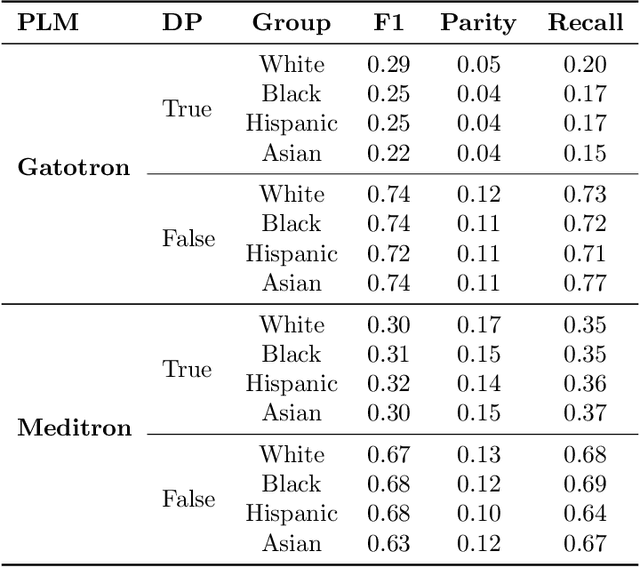

Protecting patient data privacy is a critical concern when deploying machine learning algorithms in healthcare. Differential privacy (DP) is a common method for preserving privacy in such settings and, in this work, we examine two key trade-offs in applying DP to the NLP task of medical coding (ICD classification). Regarding the privacy-utility trade-off, we observe a significant performance drop in the privacy preserving models, with more than a 40% reduction in micro F1 scores on the top 50 labels in the MIMIC-III dataset. From the perspective of the privacy-fairness trade-off, we also observe an increase of over 3% in the recall gap between male and female patients in the DP models. Further understanding these trade-offs will help towards the challenges of real-world deployment.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge