Anchors Based Method for Fingertips Position Estimation from a Monocular RGB Image using Deep Neural Network

Paper and Code

May 14, 2020

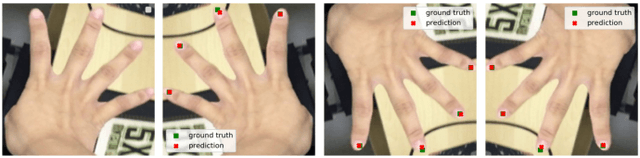

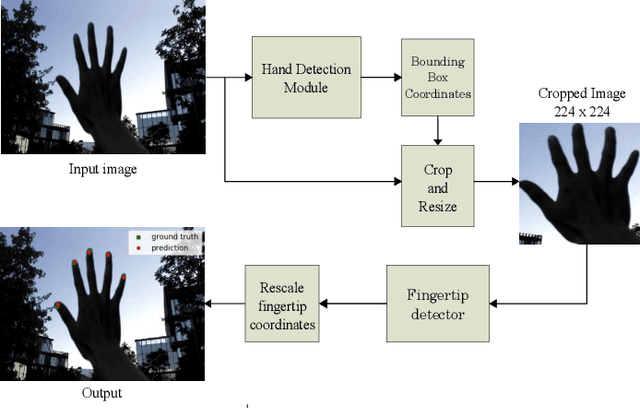

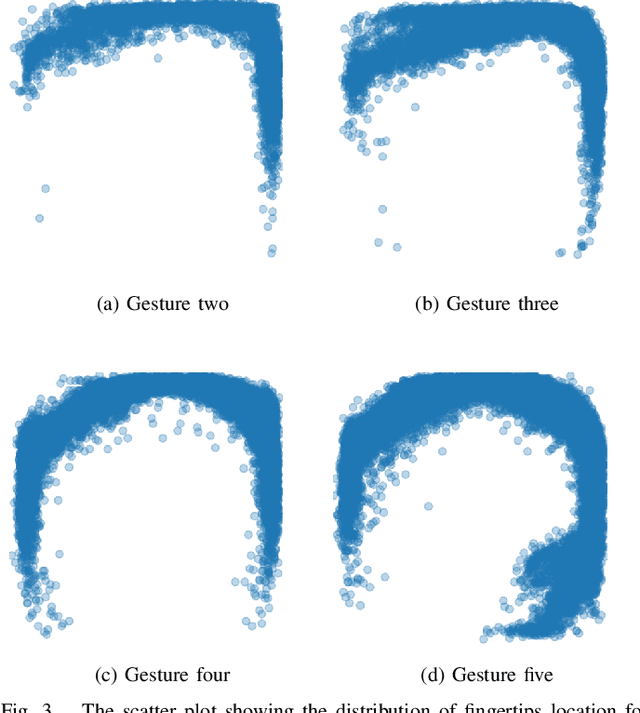

In virtual, augmented, and mixed reality, hand gestures are increasingly used to narrow the gap between the virtual and real worlds. Precise fingertip localization is essential for a seamless experience. Much of the existing research relies on depth information to estimate fingertip positions, while most work that uses RGB images is limited to detecting a single finger. Detecting multiple fingertips from a single RGB image is very challenging due to various factors. In this paper, we propose a deep neural network (DNN) based methodology to estimate fingertip positions. We call this methodology Anchor based Fingertips Position Estimation (ABFPE); it is a two-step process. Fingertip locations are estimated by regression, computing the offset of each fingertip from its nearest anchor point. The proposed framework performs best with limited dependence on hand detection results. In our experiments on the SCUT-Ego-Gesture dataset, we achieved a fingertip detection error of 2.3552 pixels on video frames with a resolution of $640 \times 480$, and about $92.98\%$ of test images have an average pixel error of five pixels.
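The anchor-offset idea mentioned in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the uniform anchor grid, the 32-pixel stride, and the helper names `make_anchor_grid`, `encode_fingertip`, and `decode_fingertip` are all assumptions made for illustration. The sketch only shows how a fingertip position might be expressed as an offset from its nearest anchor (the regression target) and then recovered from that offset.

```python
import numpy as np


def make_anchor_grid(width, height, stride):
    """Return an (N, 2) array of (x, y) anchor points on a uniform grid.

    Assumption: anchors sit at the centres of stride x stride cells.
    The abstract does not specify how the anchors are laid out.
    """
    xs = np.arange(stride / 2, width, stride)
    ys = np.arange(stride / 2, height, stride)
    grid_x, grid_y = np.meshgrid(xs, ys)
    return np.stack([grid_x.ravel(), grid_y.ravel()], axis=1)


def encode_fingertip(fingertip, anchors):
    """Find the nearest anchor and the offset of the fingertip from it."""
    distances = np.linalg.norm(anchors - fingertip, axis=1)
    nearest = int(np.argmin(distances))
    offset = fingertip - anchors[nearest]  # hypothetical regression target
    return nearest, offset


def decode_fingertip(nearest, offset, anchors):
    """Recover the fingertip position from an anchor index and an offset."""
    return anchors[nearest] + offset


# Hypothetical example on a 640 x 480 frame with an assumed stride of 32.
anchors = make_anchor_grid(640, 480, 32)
fingertip = np.array([100.0, 200.0])
nearest, offset = encode_fingertip(fingertip, anchors)
recovered = decode_fingertip(nearest, offset, anchors)
```

In a learned model, the network would predict the offset (and which anchor is responsible) rather than compute it from ground truth; the encoding step here corresponds to how training targets could be built under these assumptions.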
