An XAI Approach to Deep Learning Models in the Detection of Ductal Carcinoma in Situ

Paper and Code

Jun 27, 2021

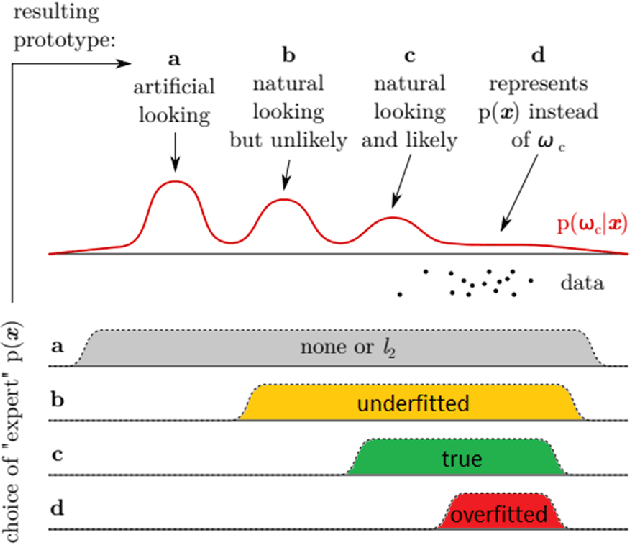

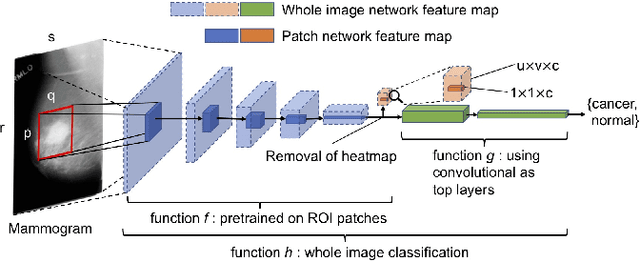

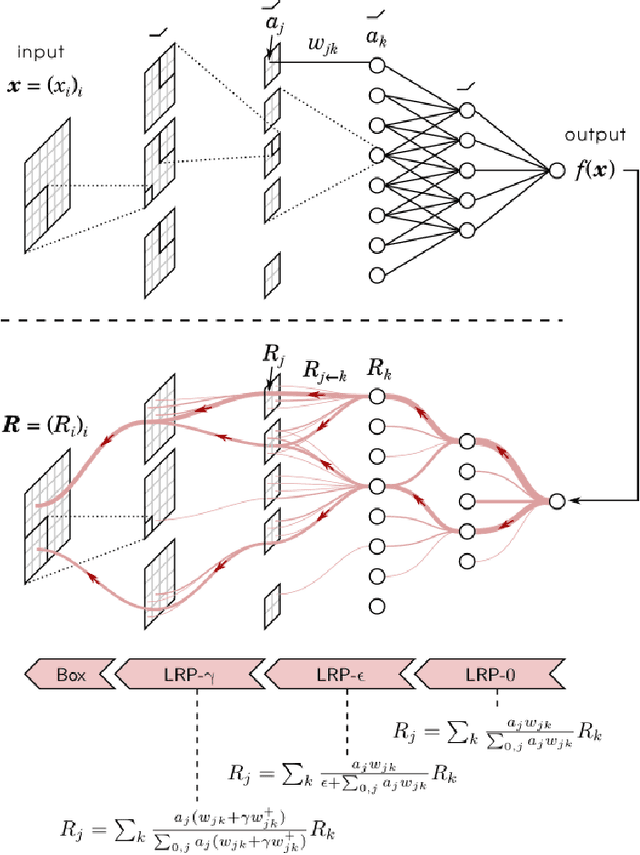

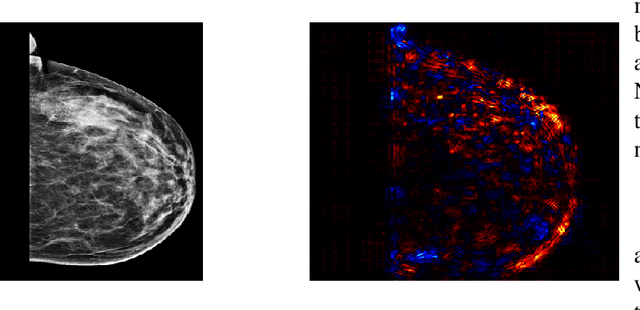

Over the last decade or so, the deep learning community has increasingly turned its attention to health-related problems, particularly breast cancer. Following the Camelyon-16 challenge in 2016, several researchers have dedicated their time to building Convolutional Neural Networks (CNNs) to help radiologists and other clinicians diagnose breast cancer. In particular, there has been an emphasis on Ductal Carcinoma in Situ (DCIS), the clinical term for early-stage breast cancer. Large companies have contributed their fair share of research on this subject, among them Google DeepMind, which in 2020 developed a model that proved better than radiologists at correctly diagnosing breast cancer. We found that, among the open issues, there is a need for an explanatory system that traces back through the hidden layers of a CNN to highlight the pixels that contributed to the classification of a mammogram. We therefore chose an open-source, reasonably successful project developed by Prof. Shen, which uses the CBIS-DDSM image database, to run our experiments on. It was later improved using the ResNet-50 and VGG-16 patch classifiers, and the outcomes of the two were compared analytically. The results showed that the ResNet-50 classifier converged earlier in the experiments. Following the research by Montavon and Binder, we used the DeepTaylor Layer-wise Relevance Propagation (LRP) model to highlight the pixels and regions within a mammogram that contribute most to its classification. The output is a map over the original image showing which pixels contribute to the diagnosis and the extent to which each contributes to the final classification. The most significant advantage of this algorithm is that it performs exceptionally well with the ResNet-50 patch classifier architecture.
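To make the idea of a pixel-level relevance map concrete, the following is a minimal sketch of relevance propagation using the z+ rule, one of the rules associated with deep Taylor decomposition. It is not the authors' implementation: it assumes a toy two-layer dense ReLU network with random weights and zero biases in place of the ResNet-50 patch classifier, a random 4x4 array in place of a mammogram patch, and non-negative inputs. The names `zplus_backward` and `lrp_heatmap` are hypothetical.

```python
import numpy as np

def zplus_backward(a, W, R_out, eps=1e-9):
    """Redistribute relevance from a layer's outputs to its inputs (z+ rule).

    a: non-negative input activations, shape (n_in,)
    W: weight matrix, shape (n_in, n_out)
    R_out: relevance assigned to the outputs, shape (n_out,)
    """
    Wp = np.maximum(W, 0.0)   # keep only positive weights
    z = a @ Wp + eps          # total positive contribution reaching each output
    s = R_out / z             # relevance per unit of contribution
    return a * (s @ Wp.T)     # each input's share, proportional to a_i * w_ij^+

def lrp_heatmap(x, W1, b1, W2, b2, target):
    """Forward pass, then propagate the target logit back to the input pixels."""
    a1 = np.maximum(x @ W1 + b1, 0.0)          # hidden ReLU layer
    logits = a1 @ W2 + b2
    R2 = np.zeros_like(logits)
    R2[target] = max(logits[target], 0.0)      # start from the (non-negative) target score
    R1 = zplus_backward(a1, W2, R2)            # output layer -> hidden layer
    R0 = zplus_backward(x, W1, R1)             # hidden layer -> input pixels
    return R0, logits

# Toy stand-ins (assumptions): a 4x4 "patch" with intensities in [0, 1)
# and an untrained network with zero biases.
rng = np.random.default_rng(0)
patch = rng.random((4, 4))
W1, b1 = rng.normal(size=(16, 8)), np.zeros(8)
W2, b2 = rng.normal(size=(8, 2)), np.zeros(2)

_, logits = lrp_heatmap(patch.ravel(), W1, b1, W2, b2, target=0)
target = int(np.argmax(logits))                # explain the predicted class
R0, logits = lrp_heatmap(patch.ravel(), W1, b1, W2, b2, target)
heatmap = R0.reshape(patch.shape)              # one relevance value per pixel
```

Under these assumptions every entry of `heatmap` is non-negative, and the entries sum (up to the small `eps` stabilizer) to the target score, so each value can be read as that pixel's share of the classification evidence. A real convolutional classifier would need the corresponding rules for convolution, pooling, and residual connections, which this sketch does not cover.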
