Aligning Intraobserver Agreement by Transitivity


Sep 29, 2020

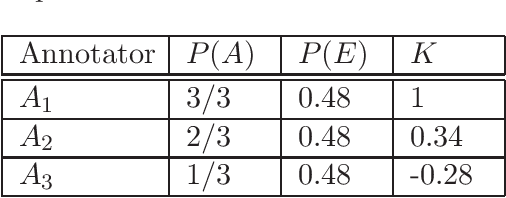

Annotation reproducibility and accuracy rely on good consistency within annotators. We propose a novel method for measuring within-annotator consistency, or annotator Intraobserver Agreement (IA). The proposed approach is based on transitivity, a measure that has been thoroughly studied in the context of rational decision-making. In contrast with the test-retest strategy commonly used for annotator IA, the transitivity measure is less sensitive to the several types of bias that test-retest introduces. We present a representation theorem to the effect that relative judgement data that satisfy transitivity can be mapped to a scale (in the sense of measurement theory). We also discuss a further application of transitivity in data collection design, to address the quadratic complexity of collecting relative judgements.
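The abstract does not define the transitivity measure itself, so the following is only an illustrative sketch of one common way to quantify transitivity in relative judgements: the fraction of item triples whose pairwise judgements contain no preference cycle. The function name `transitivity_rate`, the `prefers` dictionary layout, and the assumption of complete, strict (tie-free) judgements are all hypothetical choices made for this example, not taken from the paper.

```python
from itertools import combinations


def transitivity_rate(items, prefers):
    """Fraction of item triples whose pairwise judgements are transitive.

    Hypothetical data layout: `prefers[(a, b)]` is True if the annotator
    ranked `a` above `b`. Each unordered pair must appear once, in either
    order. Assumes strict judgements with no ties and no missing pairs.
    """
    def beats(a, b):
        # Look the pair up in whichever order it was recorded.
        if (a, b) in prefers:
            return prefers[(a, b)]
        return not prefers[(b, a)]

    triples = list(combinations(items, 3))
    if not triples:
        raise ValueError("need at least three items to test transitivity")

    transitive = 0
    for a, b, c in triples:
        # Number of wins each item has inside this triple.
        wins = sorted([
            beats(a, b) + beats(a, c),
            beats(b, a) + beats(b, c),
            beats(c, a) + beats(c, b),
        ])
        # With strict judgements a triple is cyclic exactly when every
        # item wins once; otherwise the win counts are 0, 1, 2.
        if wins != [1, 1, 1]:
            transitive += 1
    return transitive / len(triples)


items = ["A", "B", "C", "D"]

# Judgements consistent with the ordering A > B > C > D.
consistent = {pair: True for pair in combinations(items, 2)}

# Same judgements, except the annotator ranked C above A,
# which creates the cycle A > B > C > A.
cyclic = dict(consistent)
cyclic[("A", "C")] = False

print(transitivity_rate(items, consistent))
print(transitivity_rate(items, cyclic))
```

In this toy data the first annotator is fully transitive, while the second has one cyclic triple out of four. Note that the input already contains one judgement per pair of items, n(n-1)/2 in total, which is the quadratic collection cost the abstract refers to.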
