Adaptive Combination of a Genetic Algorithm and Novelty Search for Deep Neuroevolution

Paper and Code

Sep 08, 2022

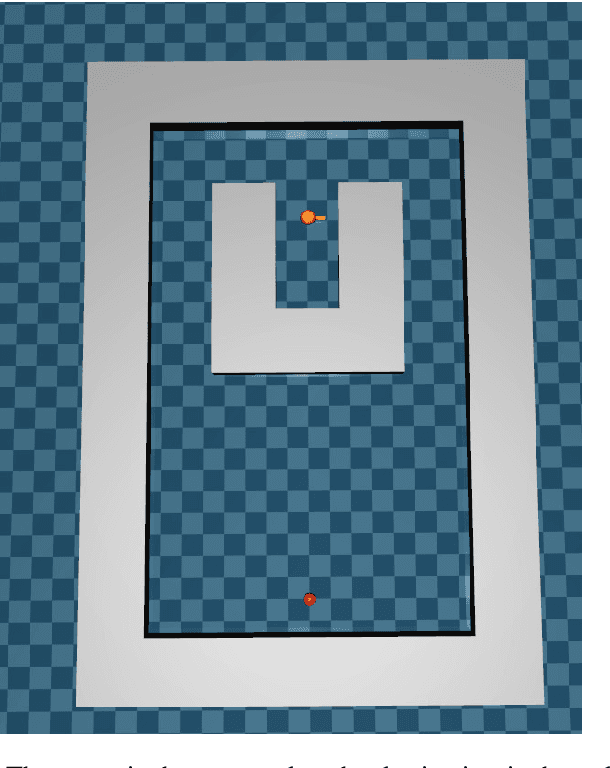

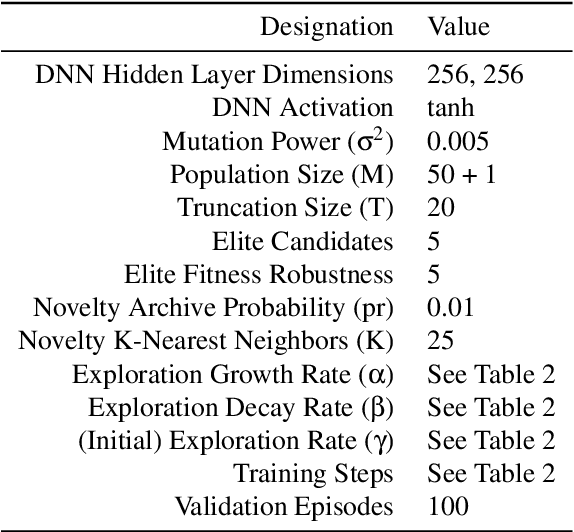

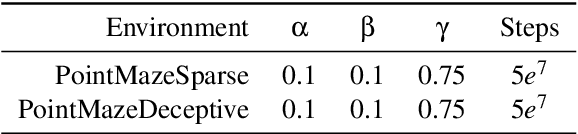

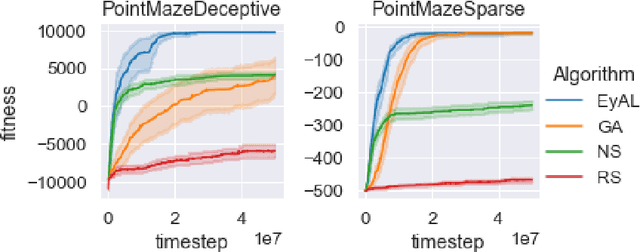

Evolutionary Computation (EC) has been shown to be able to quickly train Deep Artificial Neural Networks (DNNs) to solve Reinforcement Learning (RL) problems. While a Genetic Algorithm (GA) is well-suited for exploiting reward functions that are neither deceptive nor sparse, it struggles when the reward function is either of those. To that end, Novelty Search (NS) has been shown to be able to outperform gradient-following optimizers in some cases, while under-performing in others. We propose a new algorithm: Explore-Exploit $\gamma$-Adaptive Learner ($E^2\gamma AL$, or EyAL). By preserving a dynamically-sized niche of novelty-seeking agents, the algorithm manages to maintain population diversity, exploiting the reward signal when possible and exploring otherwise. The algorithm combines both the exploitation power of a GA and the exploration power of NS, while maintaining their simplicity and elegance. Our experiments show that EyAL outperforms NS in most scenarios, while being on par with a GA -- and in some scenarios it can outperform both. EyAL also allows the substitution of the exploiting component (GA) and the exploring component (NS) with other algorithms, e.g., Evolution Strategy and Surprise Search, thus opening the door for future research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge