A Reinforcement Learning based Path Planning Approach in 3D Environment


May 21, 2021

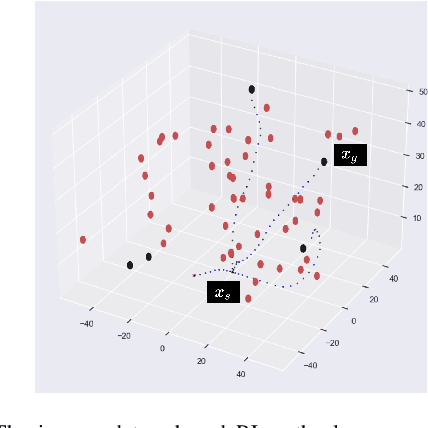

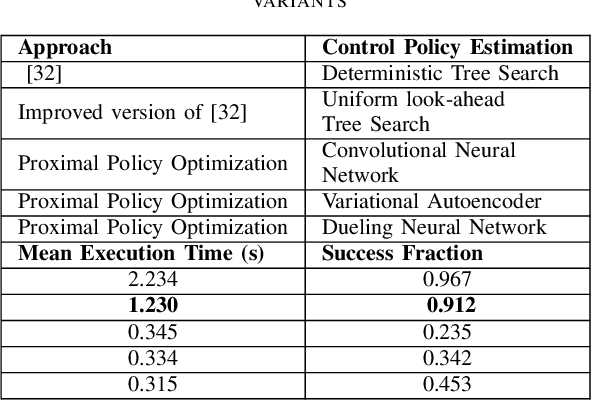

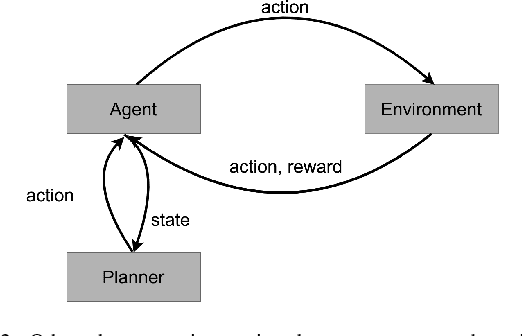

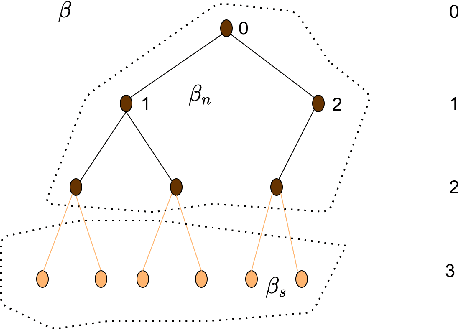

Optimal trajectory planning requires obstacle avoidance, and path planning is the key to its success. Because of their computational demands, most path planning algorithms cannot be used in real-time applications. Model-based reinforcement learning approaches to path planning have had some success in the recent past. Yet most such approaches do not produce deterministic output, owing to their nature. We analyzed three reinforcement learning-based approaches to path planning: a deterministic tree-based approach, an approach based on Q-learning, and an approach based on approximate policy gradient. We tested these approaches on two different types of simulators, each of which contains a set of random obstacles that can be changed or moved dynamically. After analyzing the results and computation time, we concluded that the deterministic tree search approach provides highly accurate results, but its computation time is considerably higher than that of the other two approaches. Finally, comparative results in terms of accuracy and computation time are provided as evidence.
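To make the Q-learning variant mentioned in the abstract more concrete, here is a minimal tabular sketch on a small 3D grid with static obstacles. It is not the paper's implementation: the abstract gives no details of the simulators, state encoding, or rewards, so the grid size, obstacle cells, reward values, hyperparameters, and the names `step`, `train`, and `greedy_path` are all illustrative assumptions.

```python
import random

# Hypothetical 3D grid world. Grid size, obstacle cells, rewards, and
# hyperparameters are illustrative assumptions, not the paper's setup.
SIZE = 4
START, GOAL = (0, 0, 0), (3, 3, 3)
OBSTACLES = {(1, 1, 1), (2, 2, 2), (1, 2, 1), (2, 1, 3)}
# Six axis-aligned unit moves in 3D.
ACTIONS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def step(state, action):
    """Apply a move; blocked or out-of-bounds moves leave the agent in place."""
    nxt = tuple(s + a for s, a in zip(state, action))
    if any(c < 0 or c >= SIZE for c in nxt) or nxt in OBSTACLES:
        return state, -5.0, False  # penalty for hitting a wall or obstacle
    if nxt == GOAL:
        return nxt, 100.0, True
    return nxt, -1.0, False  # small cost per step favors short paths


def train(episodes=3000, alpha=0.5, gamma=0.95, epsilon=0.2, seed=0):
    """Tabular Q-learning with an epsilon-greedy behavior policy."""
    rng = random.Random(seed)
    q = {}  # maps (state, action_index) -> value; missing entries count as 0.0
    n = len(ACTIONS)
    for _ in range(episodes):
        state = START
        for _ in range(200):  # cap on episode length
            if rng.random() < epsilon:
                a = rng.randrange(n)
            else:
                a = max(range(n), key=lambda i: q.get((state, i), 0.0))
            nxt, reward, done = step(state, ACTIONS[a])
            best_next = 0.0 if done else max(q.get((nxt, i), 0.0) for i in range(n))
            old = q.get((state, a), 0.0)
            # Standard Q-learning update toward the bootstrapped target.
            q[(state, a)] = old + alpha * (reward + gamma * best_next - old)
            state = nxt
            if done:
                break
    return q


def greedy_path(q, max_steps=50):
    """Follow the greedy policy from START and return the visited cells."""
    path, state = [START], START
    for _ in range(max_steps):
        a = max(range(len(ACTIONS)), key=lambda i: q.get((state, i), 0.0))
        state, _, done = step(state, ACTIONS[a])
        path.append(state)
        if done:
            break
    return path


if __name__ == "__main__":
    print(greedy_path(train()))
```

Once training is done, extracting a path is only a sequence of table lookups, which is one plausible reason a learned policy can be cheaper at query time than a tree search. Handling obstacles that move during an episode, as in the simulators described above, would require additional state information or retraining, which this sketch does not attempt.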
