A Probabilistic Relaxation of the Two-Stage Object Pose Estimation Paradigm


Jun 01, 2023

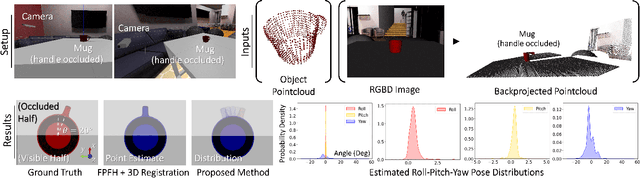

Existing object pose estimation methods commonly require a one-to-one point matching step, which forces them into two consecutive stages: visual correspondence detection (e.g., by matching feature descriptors as part of a perception front-end) followed by geometric alignment (e.g., by optimizing a robust estimation objective for point cloud registration or perspective-n-point). Instead, we propose a matching-free probabilistic formulation with two main benefits: i) it enables unified and concurrent optimization of both visual correspondence and geometric alignment, and ii) it can represent the different plausible modes of the entire distribution of likely poses. This in turn allows for a more graceful treatment of geometric perception scenarios where one-to-one matching between points is conceptually ill-defined, such as textureless, symmetrical, and/or occluded objects, and scenes where the correct pose is uncertain or there are multiple equally valid solutions.
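
To make the idea of a matching-free objective concrete, here is a minimal toy sketch in Python. It is not the paper's formulation: it assumes a 2D square as the object, rotation-only poses, and an isotropic Gaussian mixture in which every scene point may have been generated by any model point with equal probability. The function names, the `sigma` value, and the grid of candidate angles are all hypothetical choices for illustration. Because correspondences are summed out rather than fixed, the symmetric square yields four equally likely rotations instead of one.

```python
import numpy as np

def rotation(theta):
    """2D rotation matrix for angle theta (radians)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])

def matching_free_log_likelihood(model, scene, theta, sigma=0.1):
    """Unnormalized log-likelihood of a rotation with correspondences summed out.

    Assumption (illustrative only): each scene point is drawn from an
    equal-weight Gaussian mixture centered on the rotated model points,
    so no one-to-one matching is ever computed.
    """
    transformed = model @ rotation(theta).T               # (M, 2)
    diff = scene[:, None, :] - transformed[None, :, :]    # (N, M, 2)
    sq_dist = np.sum(diff ** 2, axis=-1)                  # (N, M)
    log_kernel = -sq_dist / (2.0 * sigma ** 2)
    # Numerically stable log of the mean over model points (log-sum-exp).
    peak = log_kernel.max(axis=1, keepdims=True)
    log_mix = peak[:, 0] + np.log(np.mean(np.exp(log_kernel - peak), axis=1))
    return float(np.sum(log_mix))

# Toy symmetric object: the four corners of a square.
model = np.array([[1.0, 1.0], [-1.0, 1.0], [-1.0, -1.0], [1.0, -1.0]])
# Observed scene: the same square rotated by 90 degrees.
scene = model @ rotation(np.deg2rad(90.0)).T

# Score a grid of candidate rotations and collect all near-optimal ones.
angles_deg = np.arange(0, 360, 5)
scores = np.array([
    matching_free_log_likelihood(model, scene, np.deg2rad(a))
    for a in angles_deg
])
modes_deg = angles_deg[np.isclose(scores, scores.max(), atol=1e-6)]
print(modes_deg)
```

In this toy setting a hard one-to-one matcher would have to commit to a single assignment of corners, whereas the marginalized score keeps every symmetric rotation as a separate mode of the pose distribution.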
