A Novel Dual of Shannon Information and Weighting Scheme

Paper and Code

Apr 25, 2023

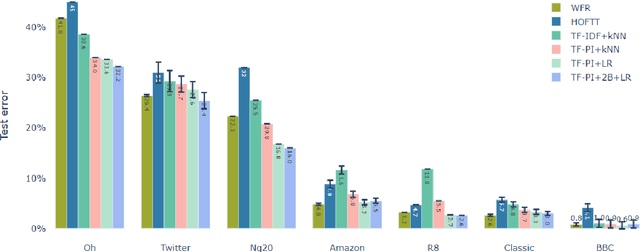

Shannon information theory has achieved great success not only in communication technology, for which it was originally developed, but also in many other science and engineering fields such as machine learning and artificial intelligence. Inspired by the well-known weighting scheme TF-IDF, we discovered that information entropy has a natural dual. We complement classical Shannon information theory by proposing a novel quantity, namely troenpy. Troenpy measures the certainty, commonness, and similarity of the underlying distribution. To demonstrate its usefulness, we propose a troenpy-based weighting scheme for documents with class labels, namely positive class frequency (PCF). On a collection of public datasets, we show that the PCF-based weighting scheme outperforms both classical TF-IDF and a popular optimal-transport-based word mover's distance algorithm in a kNN setting. We further develop a new odds-ratio-type feature, namely Expected Class Information Bias (ECIB), which can be regarded as the expected odds ratio of the two information quantities, entropy and troenpy. In our experiments, we observe that adding the new ECIB features and simple binary term features to a simple logistic regression model significantly improves performance further. The new weighting scheme and the ECIB features are simple and effective, and both can be computed with linear complexity.
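
For orientation, the two classical quantities the abstract builds on, Shannon entropy and TF-IDF, can be sketched in a few lines. This is a minimal illustration using the textbook definitions only. The function names `shannon_entropy` and `tf_idf` are hypothetical, and the unsmoothed variant `idf = log(N / df)` is an assumption, since the abstract does not say which TF-IDF variant serves as the baseline. Troenpy, PCF, and ECIB are not implemented here because the abstract does not give their formulas.

```python
import math
from collections import Counter


def shannon_entropy(probs):
    """Shannon entropy (in nats) of a discrete distribution given as probabilities."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def tf_idf(docs):
    """Classical TF-IDF weights for a list of tokenized documents.

    Assumes raw term counts for TF and the unsmoothed idf = log(N / df),
    where N is the number of documents and df the document frequency.
    Returns one dict (term -> weight) per document.
    """
    n_docs = len(docs)
    # Document frequency: number of documents containing each term.
    doc_freq = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        term_counts = Counter(doc)
        weights.append(
            {t: c * math.log(n_docs / doc_freq[t]) for t, c in term_counts.items()}
        )
    return weights


if __name__ == "__main__":
    print(shannon_entropy([0.5, 0.5]))
    print(tf_idf([["cat", "sat"], ["cat", "ran"]]))
```

A term that appears in every document receives weight zero under this variant, which is the behavior that label-aware schemes such as the paper's PCF aim to refine by also using class information.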
